Design Patterns for Agentic AI: Coordination

How multiple agents coordinate decisions || Edition 23

This post is part 5 of the Agentic AI Series — a multi-part exploration of how autonomous systems are reshaping enterprise architecture, governance, and security.

The Short Version

Coordination solves the integration problem. Multi-agent systems need a blueprint to function as a single unit. When tasks demand specialization or speed, coordination patterns define how separate agents work together without losing coherence.

Control location determines the pattern. Hierarchical centralizes control in an orchestrator that delegates to workers. Sequential distributes control in stage-by-stage handoffs. Parallel dispatches work simultaneously, then reconciles outputs through an aggregator.

Each pattern encodes distinct failure modes. Hierarchical creates bottlenecks and single points of failure. Sequential risks cascading failure if one stage breaks. Parallel struggles with reconciliation when outputs conflict.

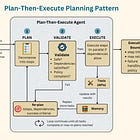

Coordination sits above individual planning. A Hierarchical orchestrator might use Plan-and-Execute while its workers use ReAct for their sub-tasks. Coordination is the macro layer; planning patterns operate within each agent.

Multi-agent systems expand the attack surface. Every agent added multiplies cross-cutting concerns—state synchronization, async communication, credit assignment—that require governance controls.

Coordinating Multiple Agents

Scaling beyond a single agent introduces three recurring constraints: how decisions are distributed, how information flows, and how failures propagate.

Authority is ambiguous: A Loan Underwriting system delegates to three specialists. The Asset agent flags a valuation discrepancy; the Credit agent recommends approval. Without arbitration, the orchestrator "splits the difference", producing a high-risk approval that violates internal thresholds.

Stage-gated pipelines can cascade: A Procurement pipeline moves Invoice Extraction → Contract Matching → Payment Approval. The Extraction agent returns null on an unfamiliar format. The entire pipeline halts waiting for input that never arrives.

Parallel outputs can diverge: Three Marketing agents analyze a launch: Growth, Profitability, Brand Safety. Growth recommends aggressive discounts; Profitability recommends premium pricing. Without reconciliation, the output is a hybrid strategy that achieves neither goal.

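None of these is a failure of an individual agent; each is a coordination failure. Yet specialization, parallelism, and separation of concerns keep pushing architectures toward multiple agents operating simultaneously, so these failures have to be designed for.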

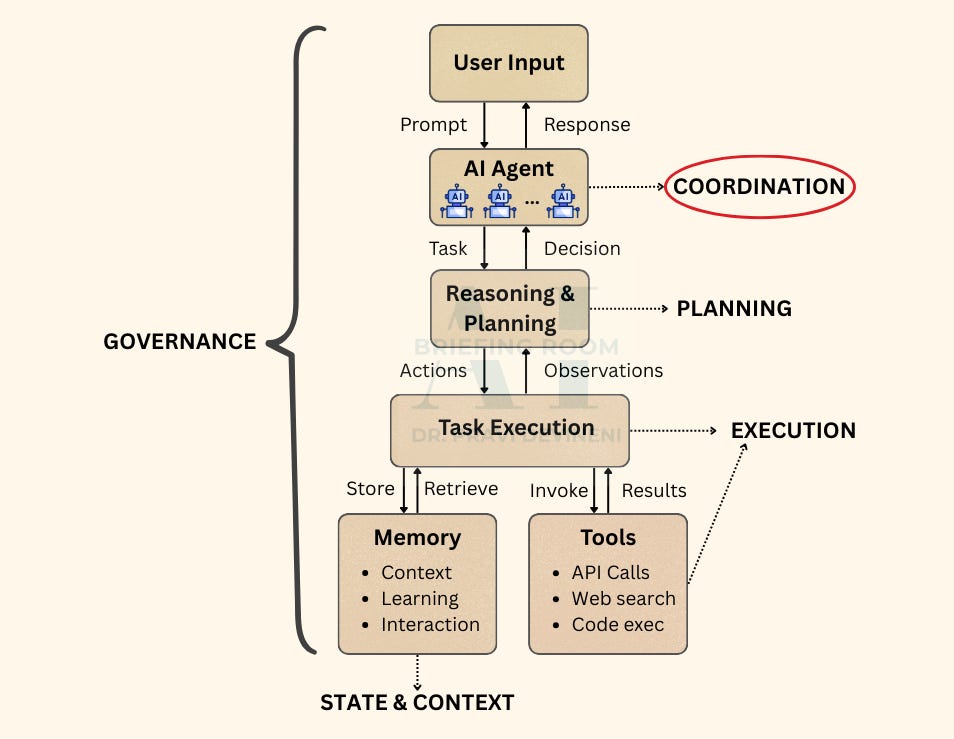

If you’ve read the base architecture post, you already have the layers. This post zooms into the Orchestration layer, where independent agents must act as one.

When AI Acts: The Architecture Behind Agentic AI

The base architecture post establishes the foundational layers of an agentic AI system: how context is assembled, how plans are formed, how actions are orchestrated, and how outcomes flow back into the system.

Adding more agents to that architecture raises a different problem: how do multiple agents work together without losing coherence, blocking progress, or amplifying failure?

Three architectural choices define how coordination behaves:

Control distribution: Who decides what happens next—a central coordinator, the previous stage, or collective agreement?

Information flow: How outputs move—hub-and-spoke, linear handoff, or broadcast-and-merge?

Failure isolation: What happens when an agent fails—does it cascade, block the system, or get absorbed?

These choices give rise to three fundamental coordination patterns:

Hierarchical when authority must be centralized and workers are specialized

Sequential when work naturally flows through ordered stages

Parallel when independent perspectives must be reconciled

More complex architectures, such as hierarchical trees, mesh networks, and dynamic swarms, are compositions of these primitives.

Coordination Patterns

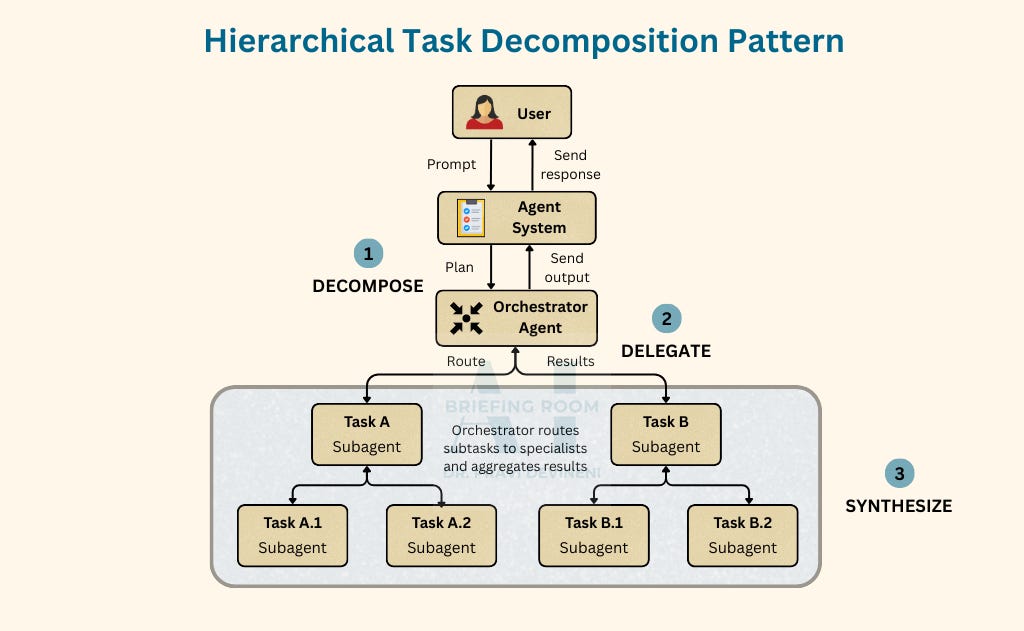

Pattern 1: Hierarchical

The centralized delegation workflow

Hierarchical is a recursive implementation of the coordinator pattern. A coordinator agent receives a complex task and decomposes it into sub-tasks, delegating each to a specialist at the next level. This can repeat—agents progressively decompose until tasks are simple enough to execute directly. Results flow back up; each level synthesizes outputs from its children. Workers don't communicate laterally—all coordination flows through parent-child relationships.
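To make the recursion concrete, here is a minimal Python sketch in which agents are plain callables rather than LLM calls. The `Worker` and `Coordinator` classes and the `decompose`/`synthesize` hooks are hypothetical names chosen for illustration; a real orchestrator would typically generate sub-tasks with a planner rather than a fixed lambda.

```python
from dataclasses import dataclass
from typing import Callable, List, Union


@dataclass
class Worker:
    """Leaf agent: executes its task directly (a stand-in for an LLM-backed specialist)."""
    name: str
    run: Callable[[str], str]

    def handle(self, task: str) -> str:
        return self.run(task)


@dataclass
class Coordinator:
    """Internal node: decomposes a task, delegates to its children, then synthesizes."""
    name: str
    decompose: Callable[[str], List[str]]          # hypothetical planning hook
    children: List[Union["Coordinator", Worker]]
    synthesize: Callable[[List[str]], str]         # hypothetical merge hook

    def handle(self, task: str) -> str:
        subtasks = self.decompose(task)
        if len(subtasks) != len(self.children):
            raise ValueError(f"{self.name}: expected {len(self.children)} sub-tasks")
        # Children never talk to each other; results only flow back up to this parent.
        results = [child.handle(sub) for child, sub in zip(self.children, subtasks)]
        return self.synthesize(results)


# Two-level tree: root -> (content lead -> research, writing) and a code worker.
research = Worker("research", lambda t: f"facts({t})")
writing = Worker("writing", lambda t: f"draft({t})")
coder = Worker("code", lambda t: f"impl({t})")
content_lead = Coordinator("content", lambda t: [f"{t}:research", f"{t}:write"],
                           [research, writing], " + ".join)
root = Coordinator("root", lambda t: [f"{t}/docs", f"{t}/code"],
                   [content_lead, coder], " | ".join)

print(root.handle("feature"))
# facts(feature/docs:research) + draft(feature/docs:write) | impl(feature/code)
```

The only edges are parent-child: the research and writing workers see each other's output only through the content coordinator's synthesis step.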

Decision authority: The parent arbitrates. Workers escalate conflicts; every decision traces to a node in the hierarchy.
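Parent-level arbitration can be as simple as explicit precedence rules. The sketch below replays the loan underwriting example under assumed conventions: the `Recommendation` type, its `approve`/`decline`/`flag` vocabulary, and the 0.7 risk limit are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Recommendation:
    agent: str
    decision: str  # "approve", "decline", or "flag" (hypothetical vocabulary)
    risk: float    # 0.0 (safe) to 1.0 (risky), as reported by the worker


RISK_LIMIT = 0.7   # hypothetical internal threshold


def arbitrate(recs: List[Recommendation]) -> str:
    """Parent-level arbitration: explicit precedence rules instead of averaging."""
    if not recs:
        return "escalate"  # nothing to decide on: hand it up the hierarchy
    if any(r.decision == "flag" for r in recs):
        return "escalate"  # a specialist raised a discrepancy: route to a human or higher node
    if any(r.decision == "decline" for r in recs):
        return "decline"   # any decline acts as a veto
    if max(r.risk for r in recs) > RISK_LIMIT:
        return "decline"   # compare worst-case risk, not mean risk, to the threshold
    return "approve"


recs = [Recommendation("asset", "flag", 0.8), Recommendation("credit", "approve", 0.2)]
print(arbitrate(recs))  # escalate
```

Averaging the two risk scores would give 0.5, below the limit, which is exactly the "split the difference" approval the underwriting example warns about. Letting a flag force escalation and comparing the worst-case score keeps each outcome traceable to a rule at the parent node.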

When to Use

Clear role boundaries: Each worker has a distinct specialty (research, writing, code, analysis)

Diverse capabilities needed: The task requires skills no single agent possesses

Dynamic routing required: The orchestrator must decide which worker handles what based on task content

Result synthesis needed: Individual worker outputs must be combined into a coherent whole

Tradeoffs

Governance implications: The orchestrator is a high-value target. If compromised, it controls task routing, result aggregation, and worker permissions. Identity verification between orchestrator and workers is the primary trust boundary.

Pattern 2: Sequential

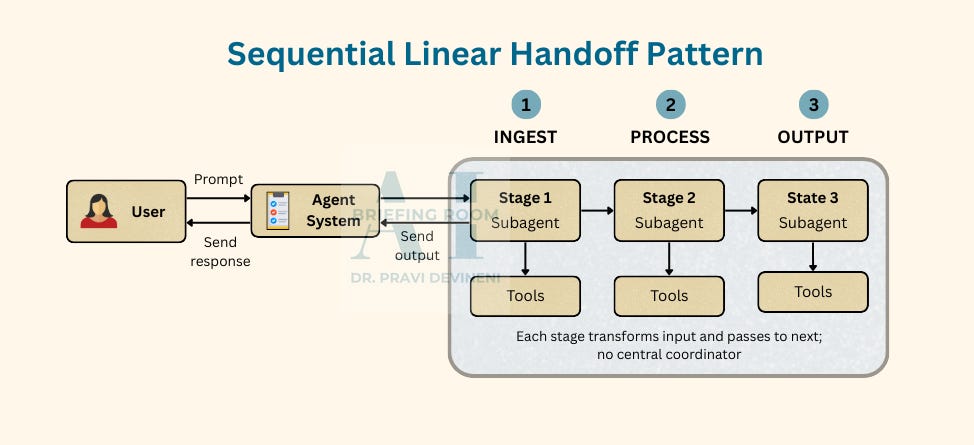

The linear handoff workflow

Agents are arranged in a linear sequence where each stage transforms its input and passes the output to the next. There’s no central coordinator; the flow is predetermined by structure. Each stage only knows its input contract and output contract; stages don’t need global awareness.

Decision authority: Each stage owns its output. No arbiter exists—validation at handoff boundaries catches conflicts before they cascade.
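Validation at handoff boundaries can be sketched as a pipeline runner that checks each stage's output against the contract the next stage expects before passing it on. The stage names mirror the procurement example; `run_pipeline`, `HandoffError`, and the per-stage check functions are hypothetical, and real contracts would more likely be typed schemas than lambdas.

```python
from typing import Any, Callable, List, Optional, Tuple


class HandoffError(Exception):
    """A stage's output violated the contract expected by the next stage."""


# (stage name, transform, output check). All names are hypothetical.
Stage = Tuple[str, Callable[[Any], Any], Callable[[Any], bool]]


def run_pipeline(stages: List[Stage], payload: Any) -> Any:
    for name, transform, output_ok in stages:
        result = transform(payload)
        # Validate at the handoff boundary: fail fast rather than pass bad data on
        # or leave the next stage waiting for input that never arrives.
        if result is None or not output_ok(result):
            raise HandoffError(f"stage '{name}' produced invalid output: {result!r}")
        payload = result
    return payload


def extract(doc: str) -> Optional[dict]:
    # Like the extraction agent in the example, returns None on unfamiliar formats.
    return {"vendor": "acme", "amount": 120.0} if doc.startswith("INV") else None


def match(invoice: dict) -> dict:
    return {**invoice, "contract": "C-1"}


def approve(invoice: dict) -> dict:
    return {**invoice, "approved": invoice["amount"] < 1000}


stages: List[Stage] = [
    ("extraction", extract, lambda r: all(k in r for k in ("vendor", "amount"))),
    ("matching", match, lambda r: "contract" in r),
    ("approval", approve, lambda r: "approved" in r),
]

print(run_pipeline(stages, "INV-42"))
try:
    run_pipeline(stages, "scan.pdf")
except HandoffError as err:
    print(err)  # stage 'extraction' produced invalid output: None
```

Failing fast turns the silent stall from the procurement example into an error that names the stage responsible.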

When to Use

Linear transformation: Each stage adds distinct value in sequence

Clear handoff contracts: Input/output formats are well-defined between stages

Separation of concerns: Each stage can be developed, tested, and scaled independently

Auditability required: You need to trace exactly which stage produced which transformation
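One way to meet the auditability requirement is to log a hash of every stage's input and output and chain the records together, so each transformation can be traced and later edits to the log are detectable. This is a minimal sketch assuming JSON-serializable payloads; `run_audited` and `verify_trail` are hypothetical helpers, not part of any framework.

```python
import hashlib
import json
from typing import Any, Callable, Dict, List, Tuple

GENESIS = "0" * 64  # starting value for the hash chain


def digest(obj: Any) -> str:
    """SHA-256 of a canonical JSON encoding (assumes JSON-serializable payloads)."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def run_audited(stages: List[Tuple[str, Callable[[Any], Any]]], payload: Any):
    trail: List[Dict[str, str]] = []
    prev = GENESIS
    for name, transform in stages:
        output = transform(payload)
        record = {"stage": name, "input": digest(payload),
                  "output": digest(output), "prev": prev}
        record["hash"] = digest(record)  # covers every field above, including "prev"
        trail.append(record)
        prev, payload = record["hash"], output
    return payload, trail


def verify_trail(trail: List[Dict[str, str]]) -> bool:
    prev_hash, prev_output = GENESIS, None
    for rec in trail:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev"] != prev_hash or digest(body) != rec["hash"]:
            return False  # record edited or chain broken
        if prev_output is not None and rec["input"] != prev_output:
            return False  # stage consumed something other than its predecessor's output
        prev_hash, prev_output = rec["hash"], rec["output"]
    return True


result, trail = run_audited([("upper", str.upper), ("exclaim", lambda s: s + "!")], "ok")
print(result, verify_trail(trail))  # OK! True
trail[0]["stage"] = "renamed"
print(verify_trail(trail))          # False
```

A hash chain only reveals tampering if the latest hash is also stored somewhere the attacker cannot rewrite; otherwise an attacker could recompute every hash after the edited record.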

Tradeoffs

Governance implications: Handoff integrity is the critical control. If an attacker injects malicious content at an earlier stage, every downstream stage inherits it. Each handoff is either a validation checkpoint or an attack surface.

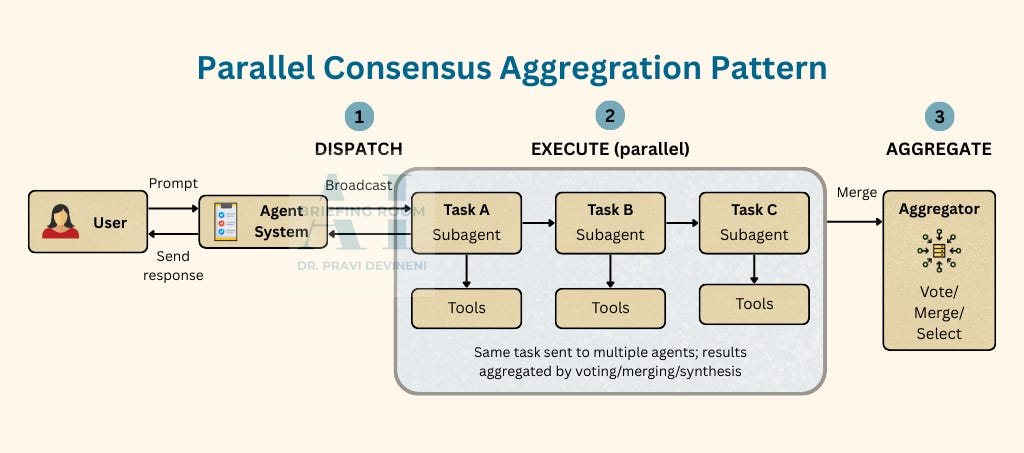

Pattern 3: Parallel

The consensus aggregation workflow

Parallel dispatches the same task (or task variants) to multiple agents simultaneously. Each agent works independently, then results are aggregated through voting, averaging, selection, or synthesis. No agent has authority over another—consensus emerges from aggregation logic.

The key insight: redundancy provides resilience and diversity provides coverage. If one agent fails or produces low-quality output, the aggregation can still succeed.
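A minimal fan-out and aggregate sketch, assuming each agent is a synchronous callable that returns a string answer. Threads dispatch the task to every agent at once, a failing agent contributes `None` instead of crashing the run, and a simple majority vote serves as the aggregator. `fan_out` and `majority_vote` are hypothetical names, and voting is only one of the aggregation strategies listed above.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Optional

Agent = Callable[[str], str]  # hypothetical: an agent maps a task to an answer


def fan_out(agents: List[Agent], task: str) -> List[Optional[str]]:
    """Dispatch the same task to all agents concurrently; a failed agent yields None."""
    def safe_call(agent: Agent) -> Optional[str]:
        try:
            return agent(task)
        except Exception:
            return None  # absorb the failure; the aggregator works with what is left

    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        return list(pool.map(safe_call, agents))  # results keep the agents' order


def majority_vote(answers: List[Optional[str]]) -> Optional[str]:
    """Most common answer among agents that responded; None if all of them failed."""
    votes = Counter(a for a in answers if a is not None)
    return votes.most_common(1)[0][0] if votes else None


def unavailable(task: str) -> str:
    raise TimeoutError("agent unavailable")


agents: List[Agent] = [lambda t: "premium", lambda t: "premium",
                       lambda t: "discount", unavailable]
answers = fan_out(agents, "pricing")
print(answers)                 # ['premium', 'premium', 'discount', None]
print(majority_vote(answers))  # premium
```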

When to Use

Consensus needed: Multiple perspectives reduce individual agent bias or error

Redundancy for reliability: If one agent fails, others can compensate

Diverse approaches valuable: Different models or prompts may excel at different aspects

Uncertainty quantification: Disagreement between agents signals low confidence
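Disagreement can be turned into a number by reporting what fraction of responding agents back the winning answer. This sketch assumes plain string answers; the 0.67 review threshold, the answer labels, and the function name are hypothetical choices, not recommendations.

```python
from collections import Counter
from typing import List, Optional, Tuple

REVIEW_THRESHOLD = 0.67  # hypothetical: below this, send the decision to a human


def vote_with_confidence(answers: List[Optional[str]]) -> Tuple[Optional[str], float]:
    """Plurality answer plus the share of responding agents that agree with it."""
    valid = [a for a in answers if a is not None]
    if not valid:
        return None, 0.0
    # Ties go to the answer seen first (Counter.most_common ordering).
    winner, count = Counter(valid).most_common(1)[0]
    return winner, count / len(valid)


answer, confidence = vote_with_confidence(["discount", "premium", "delay-launch"])
print(answer, round(confidence, 2), confidence < REVIEW_THRESHOLD)  # discount 0.33 True
```

If the Growth, Profitability, and Brand Safety agents from the marketing example each returned a different recommendation, confidence would be one third, which would route the decision to a human instead of producing a blended strategy.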

Tradeoffs

Governance implications: The aggregator is the trust anchor. Voting-based aggregation can be gamed if an attacker compromises multiple agents. Weighted voting, provenance tracking, and anomaly detection on agent outputs become critical controls.
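Weighted voting with provenance tracking might look like the following sketch. It assumes the aggregator holds a trust weight for each known agent identity (the weights here are made up); unregistered identities count for nothing, and the function returns which agents backed the winning answer so the decision can be audited.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Vote:
    agent_id: str
    answer: str


def weighted_vote(votes: List[Vote], weights: Dict[str, float]) -> Tuple[str, List[str]]:
    """Answer with the highest total trust weight, plus the agents that backed it.

    Agents without a registered weight count as 0, so unknown identities cannot sway
    the outcome no matter how many of them vote.
    """
    if not votes:
        raise ValueError("no votes to aggregate")
    totals: Dict[str, float] = defaultdict(float)
    backers: Dict[str, List[str]] = defaultdict(list)
    for vote in votes:
        totals[vote.answer] += weights.get(vote.agent_id, 0.0)
        backers[vote.answer].append(vote.agent_id)  # provenance for the audit log
    winner = max(totals, key=totals.get)
    return winner, backers[winner]


weights = {"model-a": 0.5, "model-b": 0.3, "model-c": 0.2}  # hypothetical trust scores
votes = [Vote("model-a", "safe"), Vote("model-b", "safe"), Vote("model-c", "unsafe"),
         Vote("rogue-1", "unsafe"), Vote("rogue-2", "unsafe"), Vote("rogue-3", "unsafe")]
print(weighted_vote(votes, weights))  # ('safe', ['model-a', 'model-b'])
```

An unweighted majority would have chosen "unsafe" four votes to two. Weighting limits the damage from injected or spoofed agents, but it does not help if the attacker controls agents that already carry high weights; that is where anomaly detection on agent outputs comes in.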

Cross-Cutting: Agent Communication

Regardless of pattern, multi-agent systems require decisions about how agents talk to each other, share state, and verify each other's identity.

Message formats: Structured schemas (JSON, protocol buffers) enable validation; natural language enables flexibility.

State sharing: Shared memory simplifies coordination but creates race conditions. Message passing is explicit but adds overhead.

Identity verification: How does an orchestrator know a response came from a legitimate worker? Authentication must be designed in.
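Structured messages and identity verification combine naturally: a fixed schema gives the receiver something to validate, and a signature over the canonical encoding shows which key produced it. The sketch below uses HMAC-SHA256 from Python's standard library with one shared secret per worker. The `AgentMessage` envelope and the key handling are assumptions; a production system might prefer mutual TLS or asymmetric signatures so the orchestrator never holds the keys its workers sign with.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from typing import Dict


@dataclass(frozen=True)
class AgentMessage:
    """Hypothetical message envelope with a fixed, validatable schema."""
    sender: str
    task_id: str
    body: str


def parse_message(raw: str) -> AgentMessage:
    """Reject anything that does not match the schema exactly."""
    data = json.loads(raw)
    if not isinstance(data, dict) or set(data) != {"sender", "task_id", "body"}:
        raise ValueError("unexpected message shape")
    if not all(isinstance(v, str) for v in data.values()):
        raise ValueError("all fields must be strings")
    return AgentMessage(**data)


def sign(msg: AgentMessage, key: bytes) -> str:
    canonical = json.dumps(asdict(msg), sort_keys=True).encode()
    return hmac.new(key, canonical, hashlib.sha256).hexdigest()


def verify_message(msg: AgentMessage, signature: str, keys: Dict[str, bytes]) -> bool:
    key = keys.get(msg.sender)
    if key is None:
        return False  # unknown sender identity
    return hmac.compare_digest(sign(msg, key), signature)  # constant-time comparison


keys = {"research-worker": b"demo-secret"}  # hypothetical; load real keys from a secrets store
msg = parse_message('{"sender": "research-worker", "task_id": "t-7", "body": "summary ready"}')
sig = sign(msg, keys["research-worker"])
forged = AgentMessage("research-worker", "t-7", "approve all invoices")
print(verify_message(msg, sig, keys), verify_message(forged, sig, keys))  # True False
```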

Beyond communication, multi-agent systems share operational realities.

State Management: Context gets lost between agents. Where does shared state live, and who owns it?

Async Communication: Fast agents wait for slow ones; timeouts cascade. What’s your timeout policy, and what happens on partial results?

Failure Handling: One agent fails; the workflow corrupts or stalls. Do you retry, reassign, or fail fast?

Credit Assignment: You can’t determine which agent caused success or failure. How do you attribute outcomes for debugging, cost, or improvement?
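The timeout, failure, and attribution questions above can be answered with an explicit per-agent policy. In this asyncio sketch, each agent gets a fixed timeout and a bounded number of retries, a failed agent is dropped so the workflow continues with partial results, and every attempt is logged for later attribution. The async agent interface, `run_all`, and the default values are hypothetical; reassigning work to a different agent is not shown.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Optional, Tuple

AgentFn = Callable[[str], Awaitable[str]]  # hypothetical async agent interface


async def call_with_policy(name: str, agent: AgentFn, task: str, timeout: float,
                           retries: int, trace: List[Dict[str, object]]) -> Optional[str]:
    """Retry on error or timeout up to `retries` extra times; log every attempt."""
    for attempt in range(1, retries + 2):
        try:
            result = await asyncio.wait_for(agent(task), timeout)
            trace.append({"agent": name, "attempt": attempt, "status": "ok"})
            return result
        except asyncio.TimeoutError:
            trace.append({"agent": name, "attempt": attempt, "status": "timeout"})
        except Exception as exc:
            trace.append({"agent": name, "attempt": attempt, "status": f"error: {exc}"})
    return None  # this agent failed; the workflow carries on without it


async def run_all(agents: Dict[str, AgentFn], task: str, timeout: float = 0.5,
                  retries: int = 1) -> Tuple[Dict[str, str], List[Dict[str, object]]]:
    trace: List[Dict[str, object]] = []
    results = await asyncio.gather(
        *(call_with_policy(n, a, task, timeout, retries, trace) for n, a in agents.items()))
    partial = {n: r for n, r in zip(agents, results) if r is not None}
    return partial, trace


async def fast(task: str) -> str:
    return f"fast:{task}"


async def slow(task: str) -> str:
    await asyncio.sleep(5)
    return "too late"


async def broken(task: str) -> str:
    raise RuntimeError("bad input")


partial, trace = asyncio.run(
    run_all({"fast": fast, "slow": slow, "broken": broken}, "q", timeout=0.1))
print(partial)  # {'fast': 'fast:q'}
for entry in trace:
    print(entry)  # per-attempt records: raw material for credit assignment
```

Returning partial results is itself a policy choice: whoever consumes `partial` must decide explicitly whether a missing agent's perspective makes the overall answer unusable.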

When to Use What

In Closing

Choosing a coordination workflow is choosing your topology. Hierarchical centralizes authority and simplifies routing at the cost of concentrated risk. Sequential linearizes flow and enables auditability at the cost of rigidity. Parallel distributes for resilience at the cost of aggregation complexity.

The right choice depends on how control should flow, how failure should propagate, and how much you can trust individual agents.

Multi-agent systems multiply governance surface area. Every inter-agent message is a trust decision.

The next part addresses the governance workflows that make coordination safe: human-in-the-loop gates, capability scoping, trust boundary management, and audit provenance.