Design Patterns for Agentic AI: Planning

How agents translate context into action || Edition 22

This post is part 4 of the Agentic AI Series — a multi-part exploration of how autonomous systems are reshaping enterprise architecture, governance, and security.

The Short Version

Planning is an architectural choice. ReAct, Plan-Then-Execute, and Reflection are distinct strategies for turning context into action.

Information availability drives strategy selection. ReAct learns by acting, Plan-Then-Execute defines the full sequence of steps upfront, Reflection improves quality by critiquing and refining a draft.

Chain-of-Thought improves reasoning, not execution. It makes the decision logic more explainable and reviewable, but it doesn’t control tool use, step order, or termination.

Each strategy trades off differently. ReAct risks runaway loops without bounds, Plan-Then-Execute breaks when assumptions change mid-execution, and Reflection stalls when critique quality is weak or termination thresholds are undefined.

Workflows determine governance controls. ReAct requires iteration limits and tool-call quotas, Plan-Then-Execute enables upfront validation/approval gates, and Reflection depends on critique integrity and convergence thresholds.

Planning Under Constraints

Planning isn’t about generating a linear “to-do” list. In a production environment, an agent’s plan is a living strategy that must survive three specific types of friction:

Conditions change mid-execution: A travel agent is rebooking a cancelled flight. By the time it confirms the passenger’s credit card, the seat is gone. The plan failed because the environment was faster than the execution.

Information is discovered through action: A cybersecurity agent detects an anomaly. It cannot “plan” the remediation upfront because it doesn’t know the type of attack yet. It must execute step by step and trade action for information.

Quality matters more than first-pass speed: A legal agent generates a liability clause. While the first draft looks statistically plausible, it needs to pause, critique its own logic against a safety policy, and rewrite the narrative until it meets a defensible standard.

This is the planning problem: how agents translate context into action.

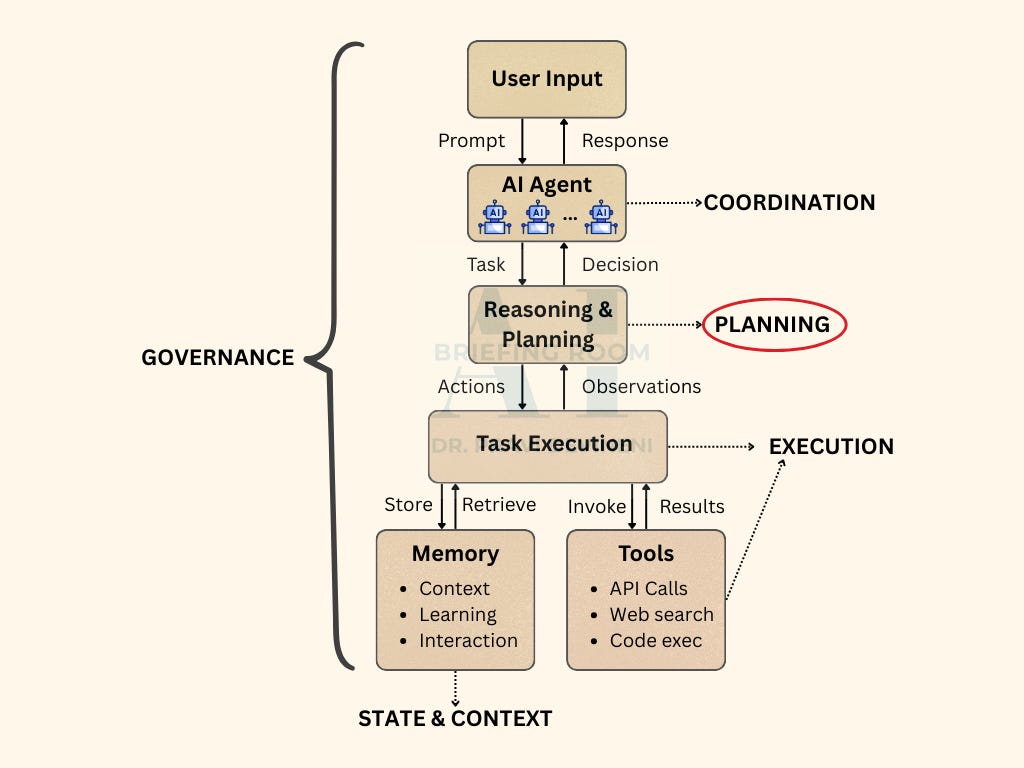

If you’ve read the base architecture post, you already have the layers. This post zooms into the Agent layer—the point where context becomes a plan.

When AI Acts: The Architecture Behind Agentic AI

The post architecture post establishes the foundational layers of an agentic AI system: how context is assembled, how plans are formed, how actions are orchestrated, and how outcomes flow back into the system.

When selecting a planning strategy, you are essentially balancing three core tradeoffs:

Information availability: Do you have enough context upfront, or must you discover it through action?

Output requirements: Is first-pass quality sufficient, or does the task demand iterative refinement?

Failure tolerance: Can you afford exploration, or do you need predictable execution?

These questions map to three planning workflows:

ReAct when information must be discovered through action

Plan-Then-Execute when the workflow is knowable and benefits from upfront structure

Reflection when quality depends on critique and iteration

Everything else is a variant or composition of these primitives. Self-Ask is ReAct with a prompting style. Tree-of-Thought is Reflection with branching. The names change; the underlying workflow doesn’t.

Planning Workflows

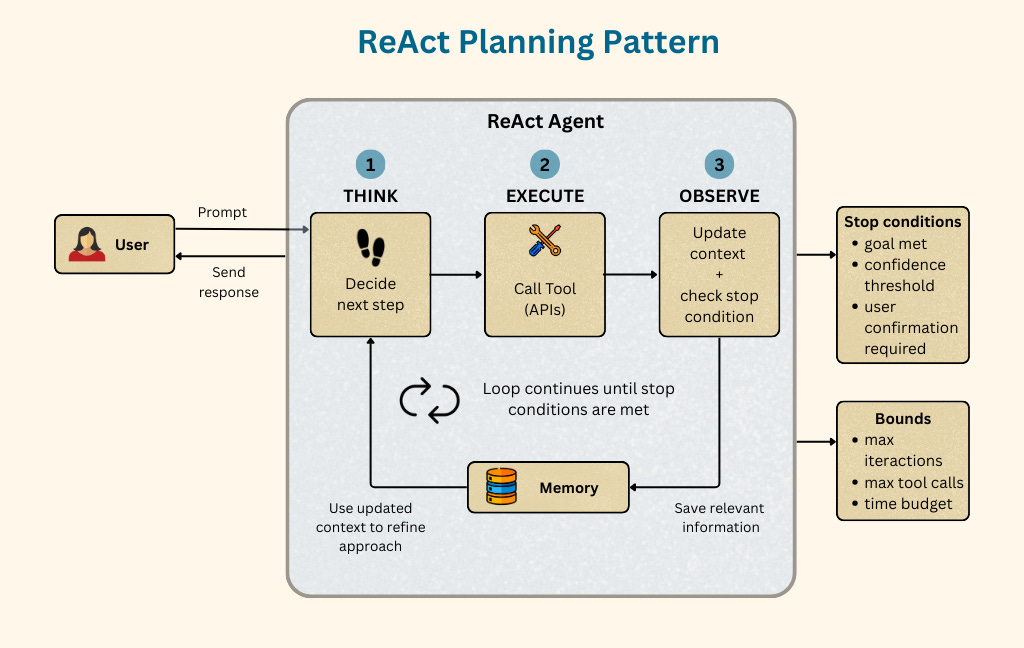

Pattern 1: ReAct (Reason + Action)

The iterative exploration workflow

ReAct interleaves decision-making, action, and observation in a continuous, bounded loop. The agent decides the next step, executes a tool call, observes the outcome, updates its working context, and repeats until a termination condition is met.

The key insight: Each observation becomes new context that shapes the next decision, allowing the agent to trade action for information in uncertain or dynamic environments—while remaining constrained by explicit stop conditions and iteration bounds.

When to use:

Unknown paths: You can’t predict what information you’ll need until you start exploring

Environmental feedback required: Actions produce observations that shape next steps

Tool discovery: The agent must probe systems to understand what’s available

Dynamic contexts: Conditions change during execution

Tradeoffs

Governance implications: ReAct's iterative nature means more tool invocations, more observations ingested, more opportunities for prompt injection or tool misuse. Iteration bounds aren't optional—they're a security control.

Pattern 2: Plan-Then-Execute

The structured decomposition workflow

Plan-Then-Execute separates planning from execution into distinct phases. First, the agent decomposes the task into a complete plan with defined steps. Then, optionally, it validates that plan against constraints. Finally, it executes each step—potentially in parallel if dependencies allow.

The key insight: validation happens before execution, not during. This is where you catch unreasonable plans, missing steps, or unsafe actions—before tools are invoked.

When to Use

Known structure: The task type is familiar; you can anticipate required steps

Parallelizable work: Independent steps can execute concurrently

Validation required: Plans must pass safety or feasibility checks before execution

Predictable resource needs: You want to estimate cost/time upfront

Tradeoffs

Governance implications: The validation phase is a natural checkpoint for human-in-the-loop review. Plans are auditable artifacts. But the weakness is rigidity—if step 3 fails, the plan may not account for graceful recovery.

Pattern 3: Reflection

The quality-refinement workflow

Reflection generates an initial output, then critiques that output against requirements or quality criteria, then refines based on the critique. This loop repeats until the output meets a threshold or iteration limits are reached.

The key insight: the critique is an explicit evaluation step, not implicit in generation. This separation allows different prompts, different models, or even human review for the critique phase.

When to Use

Quality-critical outputs: First draft isn’t good enough; refinement is expected

Complex requirements: Multiple criteria that are hard to satisfy in one pass

Subjective evaluation: “Better” requires judgment, not just correctness

High-stakes deliverables: The cost of a bad output justifies iteration overhead

Tradeoffs

Governance implications: Without iteration limits, Reflection can consume unbounded resources. The critique step can also be a target—if an attacker influences the evaluation criteria, they steer the output. Critique prompts need the same hardening as generation prompts.

Cross-Cutting: Chain-of-Thought Prompting

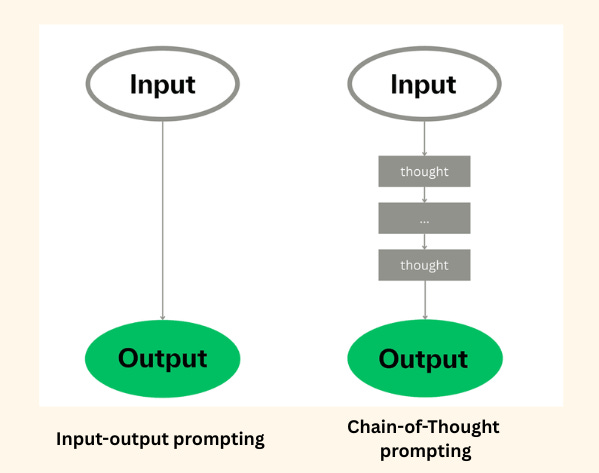

Chain-of-Thought (CoT) is not a workflow, it’s a prompting technique that enhances any workflow.

CoT instructs the model to externalize intermediate reasoning steps before producing output — "Think step by step" or "Show your work." CoT improves output quality by forcing structured reasoning within a single generation, often improving correctness and auditability. It is most useful when:

Task requires multi-step reasoning (math, logic, policy interpretation)

Intermediate conclusions matter as much as the final answer

You need reviewable or auditable reasoning traces for assurance or debugging

How it applies:

In ReAct: CoT strengthens each Think step by making the agent’s decision logic explicit before an action is taken.

In Plan-Then-Execute: CoT improves planning phase by making task decomposition explicit and reviewable.

In Reflection: Both generation and critique use CoT. It makes evaluation criteria explicit, which improves refinement quality.

CoT happens inside an LLM call. Planning workflows coordinate across calls.

When to Use What

In Closing

Choosing a planning workflow is choosing your tradeoffs. ReAct accepts iteration risk for exploration flexibility. Plan-Then-Execute trades adaptability for validation and parallelization. Reflection invests latency to achieve quality that first-pass generation cannot.

The right choice depends on what you know upfront, what quality you require, and which failure modes you can tolerate.

The planning pattern you choose determines your governance options downstream.

The next part in this series addresses what happens when agents must coordinate. The coordination patterns build on these primitives—a Supervisor might use Plan-Then-Execute internally while its workers employ ReAct. Understanding both layers is how you design systems that scale.