When AI Acts: The Architecture Behind Agentic AI

How perception, reasoning, and orchestration connect to turn intelligence into action || Edition 21

This post is part 3 of the Agentic AI Series — a multi-part exploration of how autonomous systems are reshaping enterprise architecture, governance, and security.

The Short Version

Agentic AI is a system, not a model. Four layers (Data, Agent, Orchestration, and System) cooperate to move evidence → intent → actions → outcomes.

Perception → Reasoning → Orchestration is agency in practice: Data perceives the world, the Agent decides what should happen, and Orchestration carries it out under the right guardrails.

Interfaces make the hand-offs real. Each stage passes a named artifact (context, plan, action package, outcome), so whatever crosses the boundary can be validated, logged, and understood later.

Different kinds of memory play different roles. Working memory supports the current plan, process memory tracks the run, short-term memory stays with the session, and long-term memory preserves what’s worth keeping.

Risk is architectural. As you add tools, data sources, and autonomy, interactions multiply, and so do edge cases. Start with structure.

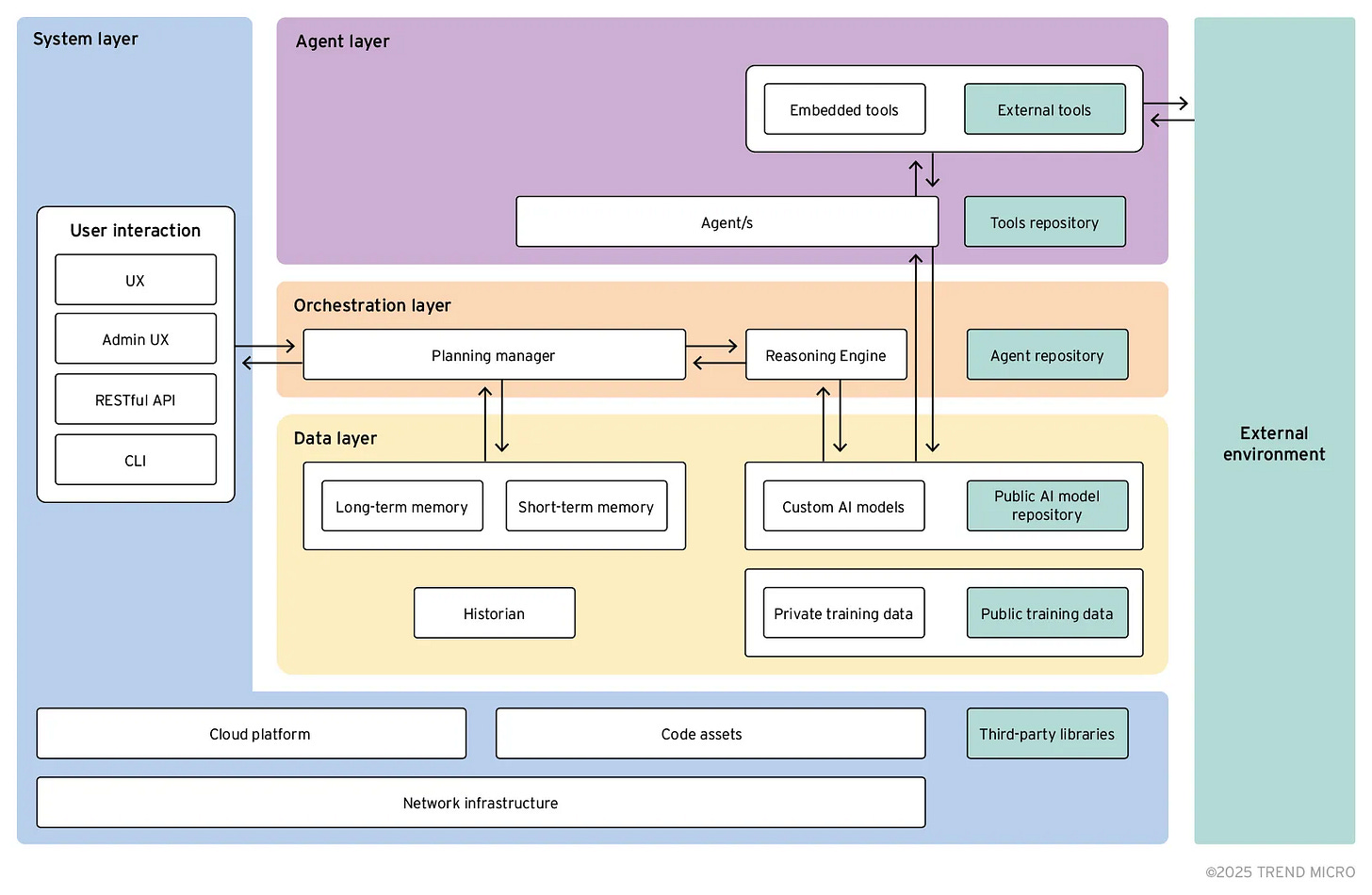

The Agentic AI System Stack

Building agentic AI means designing a system, not configuring a model. This reference architecture defines the four layers and four contracts that turn perception into reasoning, reasoning into safe execution, and execution into organizational learning.

The Base Architecture: Four Layers, Four Contracts

Modern agentic AI systems organize around four distinct layers, each with specific responsibilities. These layers (Data, Agent, Orchestration, and System) aren’t just conceptual boundaries; they’re explicit architectural layers with defined interfaces between them.

What’s a contract? In Agentic AI architecture, a contract is a named, structured hand-off between layers that defines exactly what crosses each boundary: required fields and their guarantees.

For example, when Data sends Context to Agent, both know the structure: facts with provenance, freshness, gaps, and confidence. These four contracts make the system testable and auditable, because each layer’s output can be validated independently.
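As a minimal sketch, assuming a Python implementation, the Context contract could be expressed as typed dataclasses with a validator. The names `Fact`, `ContextPackage`, `age_hours`, and the low/medium/high confidence scale are hypothetical choices for illustration, not a prescribed schema.

```python
from dataclasses import dataclass, field

# Assumed confidence scale, mirroring "Confidence: medium" in the renewal example.
ALLOWED_CONFIDENCE = {"low", "medium", "high"}

@dataclass(frozen=True)
class Fact:
    name: str          # e.g. "contract_expiry_days"
    value: object
    source: str        # provenance: which system supplied the value
    age_hours: float   # freshness: how old the value is

@dataclass
class ContextPackage:
    """Hypothetical Context contract sent from the Data layer to the Agent layer."""
    version: str
    facts: list[Fact]
    gaps: list[str] = field(default_factory=list)  # explicit unknowns
    confidence: str = "low"

    def validate(self) -> list[str]:
        """Return contract violations; an empty list means the package is valid."""
        errors = []
        if self.confidence not in ALLOWED_CONFIDENCE:
            errors.append(f"unknown confidence level: {self.confidence!r}")
        for fact in self.facts:
            if not fact.source:
                errors.append(f"{fact.name}: missing provenance")
            if fact.age_hours < 0:
                errors.append(f"{fact.name}: negative age")
        # A value cannot be both known and unknown at the same time.
        for name in sorted({f.name for f in self.facts} & set(self.gaps)):
            errors.append(f"{name}: listed as both a fact and a gap")
        return errors
```

Because the validator returns a list instead of raising on the first problem, a single log entry can record every violation a boundary check found.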

Layer 1: Data — Perceive What’s True

Makes the world understandable by collecting signals and turning them into a small bundle of useful facts for the task at hand.

What it contains:

Ingestion & normalization: connectors to apps, documents, logs, sensors; parsing and deduplication

Semantic layer: shared dictionary of business terms, entities, and relationships that keeps meaning consistent across sources

Targeted retrieval: fetches just-enough evidence with provenance and freshness attached

Memory:

Short-term: session notes, assumptions, recent outcomes (expires)

Long-term: curated lessons, glossaries, playbooks, proven patterns

Historian: event log of raw outcomes for audit and observability
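The difference between expiring short-term memory and the append-only historian can be sketched in a few lines. This is an illustration under assumptions: the class names, the fixed time-to-live policy, and the injectable clock (used so expiry can be tested) are all hypothetical. Curated long-term memory is left out because its promotion rules are a design decision per organization.

```python
import time
from typing import Callable, Optional

class ShortTermMemory:
    """Session notes that expire after a fixed time-to-live (TTL); the TTL policy is assumed."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._notes: dict[str, tuple[float, str]] = {}

    def remember(self, key: str, note: str) -> None:
        self._notes[key] = (self.clock(), note)

    def recall(self, key: str) -> Optional[str]:
        entry = self._notes.get(key)
        if entry is None:
            return None
        written_at, note = entry
        if self.clock() - written_at > self.ttl:
            del self._notes[key]  # expired: forget it rather than serve stale context
            return None
        return note

class Historian:
    """Append-only event log of raw outcomes; entries are never edited or deleted."""

    def __init__(self):
        self._events: list[dict] = []

    def append(self, event: dict) -> int:
        self._events.append(dict(event))  # store a copy so callers cannot rewrite history
        return len(self._events) - 1      # position in the log, usable as a reference

    def events(self) -> tuple[dict, ...]:
        return tuple(dict(e) for e in self._events)
```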

How it connects:

Sends → Agent: A concise context package with facts, provenance (where they came from), freshness (how recent), and explicit unknowns (what’s still missing)

Receives ← System: Outcomes of actions to update notes and lessons

Answers → Agent/Orchestration: Follow-up lookups when more facts are needed

Primary contracts: Context (to Agent), Outcomes (from System)
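Here is a hedged sketch of how targeted retrieval might assemble the Context package while keeping unknowns explicit. The alias table stands in for the semantic layer, and the fetcher signature `(value, source, age_hours)` plus the "any gap caps confidence at medium" rule are assumptions made for illustration.

```python
from typing import Callable

# Hypothetical semantic-layer alias table: source-specific names map to one canonical entity.
ALIASES = {"acme corp": "Acme Corp", "acme corporation": "Acme Corp"}

def canonical_entity(raw_name: str) -> str:
    """Resolve a source-specific account name to its canonical form (falls back to the raw name)."""
    return ALIASES.get(raw_name.strip().lower(), raw_name.strip())

def assemble_context(account: str, fetchers: dict[str, Callable[[str], tuple]]) -> dict:
    """Fetch just the facts needed; a failing source becomes an explicit gap, not a silent omission.

    Each fetcher takes the canonical account name and returns (value, source, age_hours).
    """
    entity = canonical_entity(account)
    facts, gaps = {}, {}
    for fact_name, fetch in fetchers.items():
        try:
            value, source, age_hours = fetch(entity)
            facts[fact_name] = {"value": value, "source": source, "age_hours": age_hours}
        except TimeoutError:
            gaps[fact_name] = "source timeout"
    # Assumed heuristic: any open gap caps confidence at "medium".
    confidence = "high" if not gaps else "medium"
    return {"account": entity, "facts": facts, "gaps": gaps, "confidence": confidence}
```

The design choice worth noting is that a timeout produces a named gap the Agent can act on (for example, by asking Data for a follow-up lookup) instead of a package that silently looks complete.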

Layer 2: Agent — Decide What Should Happen

Decides what should happen next by reading the facts and writing a clear, bounded plan that people and machines can follow.

What it contains:

Planner: creates numbered steps with dependencies, success checks, and a brief reasoning note

Guardrails per step: preconditions (what must be true before), postconditions (what success means), reversals (how to undo)

Limits & stop rules: depth/time/cost budgets, “good-enough” criteria, and when to ask a human

Working memory: scratch space for this plan’s assumptions, interim results, and open questions (cleared on completion)

Embedded tools: simple local helpers (calculator, parser, small lookups) for deterministic work
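Limits and stop rules become easier to reason about when they are a single, testable decision function. A minimal sketch follows; the `Limits` fields, the plan-quality `score`, the four decision labels, and the order in which checks are applied are hypothetical choices, not a standard.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Limits:
    max_depth: int       # maximum planning/replanning iterations
    max_seconds: float   # wall-clock budget
    max_cost: float      # spend budget, e.g. model-call cost (assumed unit)
    good_enough: float   # plan-quality score at which the agent stops refining

def stop_decision(depth: int, elapsed: float, cost: float, score: float,
                  needs_human: bool, limits: Limits) -> str:
    """Return "escalate", "done", "halt", or "continue" for the current planning iteration."""
    if needs_human:
        return "escalate"  # e.g. an irreversible step or context too weak to act on
    if score >= limits.good_enough:
        return "done"      # good-enough criterion met: stop refining
    if depth >= limits.max_depth or elapsed >= limits.max_seconds or cost >= limits.max_cost:
        return "halt"      # budget exhausted before reaching good enough
    return "continue"
```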

How it connects:

Receives ← Data: Context package (facts + gaps)

Sends → Orchestration: The plan (steps, limits, checks, undo paths)

Asks → Data: Targeted follow-ups to fill gaps

Reads ← System/Orchestration: Results to adjust or finish the plan

Primary contract: Plan (to Orchestration)

Layer 3: Orchestration — Control Safe Execution

Turns a plan into a safe, step-by-step run by picking the right tools, ordering the steps, and keeping everything within limits.

What it contains:

Tool directory: catalog of available tools with their inputs/outputs, preconditions, postconditions, side effects, and reversals

Router/binder: matches each plan step to concrete tools using the directory’s contracts

Runner/workflow engine: executes steps as a graph with dependencies, parallelism, retries, and joins

Compensation manager: defines and executes undo paths (saga-style) when later steps fail

Policy & budget enforcement: enforces depth/time/cost limits, tool scope restrictions, and human approval gates

Process memory: small shared board showing run status so humans and components see the same execution state
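Policy and budget enforcement can be sketched as a gate every action passes through before it runs. The `RunPolicy` and `PolicyViolation` names and the single cost budget are assumptions for illustration; the tool names reuse those from the renewal example later in this post.

```python
from dataclasses import dataclass, field

class PolicyViolation(Exception):
    """Raised when an action would break the run's declared limits or tool scope."""

@dataclass
class RunPolicy:
    allowed_tools: set[str]                                  # tool scope declared by the plan
    max_cost: float                                          # cost budget for the whole run (assumed unit)
    needs_approval: set[str] = field(default_factory=set)   # tools behind a human gate
    spent: float = 0.0

    def authorize(self, tool: str, estimated_cost: float, approved: bool = False) -> None:
        """Check one action against scope, budget, and approval gates before it runs."""
        if tool not in self.allowed_tools:
            raise PolicyViolation(f"{tool} is outside the plan's tool scope")
        if self.spent + estimated_cost > self.max_cost:
            raise PolicyViolation(f"{tool} would exceed the cost budget")
        if tool in self.needs_approval and not approved:
            raise PolicyViolation(f"{tool} requires human approval")
        self.spent += estimated_cost  # only charge the budget once the action is allowed
```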

How it connects:

Receives ← Agent: A structured plan (steps and limits)

Sends → System: Action packages (tool + inputs + success check + undo if needed)

Receives ← System: Results to continue, branch, retry, or roll back

Sends → Data: Short summaries of what the run did

Primary contract: Action Packages (to System)

Layer 4: System — Execute and Report

Executes real-world actions and reports what actually happened.

What it contains:

Experience & access surfaces: user/admin UIs, APIs, webhooks, and command-line interfaces

Execution adapters: typed connectors to business systems (CRM, email, tickets, databases, devices) with validation and side-effect policies

Transaction manager: handles idempotency, ordered execution, and coordinates compensations (saga implementation)

Outcome emitter: reports structured results with status, evidence, confidence, performance metrics, and correlation IDs

Model gateway & registry: single routing point for all model calls with cost controls, safety guardrails, and version management

Platform foundations: compute, storage, network, security controls, CI/CD pipelines, and observability infrastructure
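Idempotency is the transaction manager's simplest guarantee to illustrate: a retried or duplicated action package must not change the world twice. Below is a minimal sketch, assuming each package carries a caller-supplied idempotency key (the key format `run-7:step-2` is hypothetical).

```python
from typing import Callable

class TransactionManager:
    """Executes each action at most once per idempotency key (an assumed keying scheme)."""

    def __init__(self):
        self._results: dict[str, dict] = {}

    def execute(self, idempotency_key: str, action: Callable[[], dict]) -> dict:
        if idempotency_key in self._results:
            # Duplicate delivery or retry after success: replay the stored result.
            return self._results[idempotency_key]
        result = action()  # if this raises, nothing is stored, so a later retry runs again
        self._results[idempotency_key] = result
        return result
```

Storing only successful results is a deliberate choice in this sketch: failures stay retryable, while successes are never repeated.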

How it connects:

Receives ← Orchestration: Action packages to execute

Sends → Orchestration: Action results (success/failure with evidence)

Sends → Data: Outcome events for learning and audit records

Prompts ↔ Humans: Approvals before irreversible steps

Primary contract: Outcomes (to Orchestration and Data)

In one line: Context → Plan → Action Packages → Outcomes → Learning. That’s how perception, reasoning, and orchestration turn intelligence into dependable action.

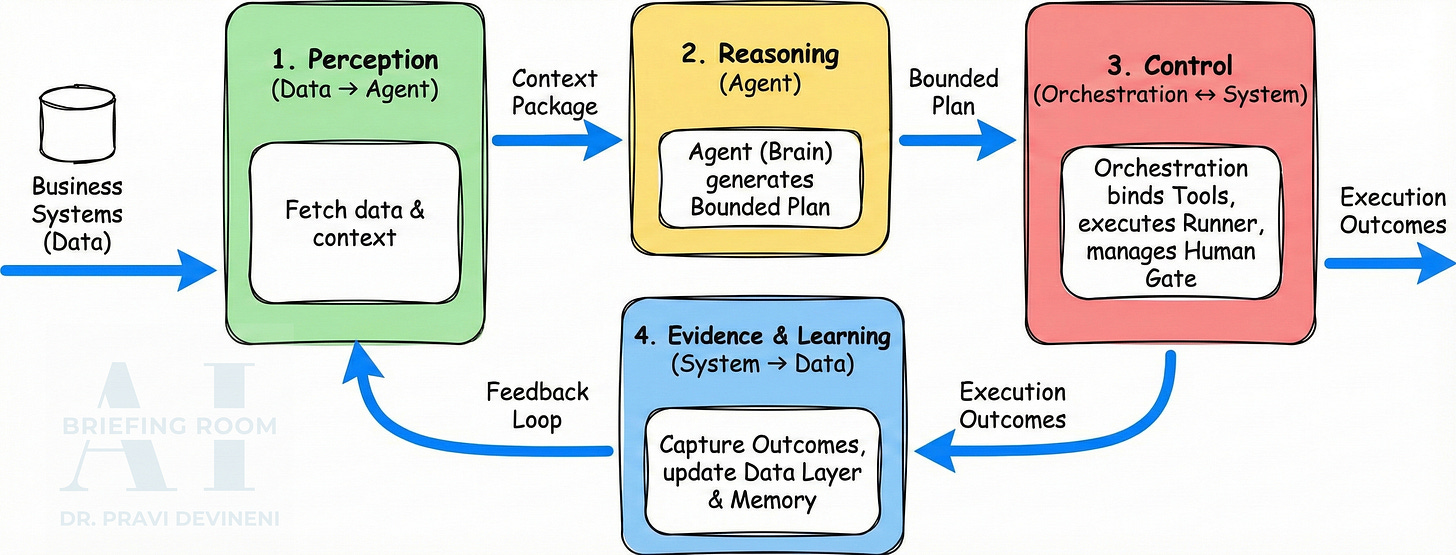

The Core Loop: How the Layers Cooperate

Example scenario: A B2B SaaS company uses an agentic system to manage customer renewals. When a contract approaches its end date, the system assesses renewal risk, checks recent customer engagement, and takes appropriate action—scheduling follow-ups for at-risk accounts or requesting discount approvals when needed.

Here’s how the four layers cooperate to accomplish this goal.

1. Perception (Data → Agent)

A request arrives. Data’s connectors normalize signals from business systems. The semantic layer reconciles identities: “Acme Corp” in billing matches “ACME Corporation” in CRM. Targeted retrieval assembles a context package:

Account: Acme Corp

Contract expiry: 42 days (source: billing, fresh: 2h)

Usage trend: -28% (source: analytics, fresh: 24h)

Last outreach: UNKNOWN (gap: CRM timeout)

Confidence: medium

2. Reasoning (Agent → Orchestration)

The Agent reads context and emits a bounded plan with numbered steps, dependencies, and clear limits:

Step 1: Check last outreach

Step 2: Create follow-up task (reversal: delete_task, skip if recent)

Step 3: Request discount approval (human gate required)
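The same plan can be written as data that Orchestration can check before anything runs. This is a sketch under assumptions: the `Step` fields and the dependency of step 3 on step 2 are illustrative, and the validation rule (every state-changing step declares a reversal, or, if irreversible, sits behind a human gate) follows the principle that approvals come before irreversible steps.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Step:
    number: int
    action: str
    changes_state: bool
    depends_on: tuple[int, ...] = ()
    reversal: Optional[str] = None  # how to undo; required unless a human gates the step
    human_gate: bool = False
    skip_if: Optional[str] = None   # hypothetical condition name, e.g. "recent_outreach"

def validate_plan(steps: list[Step]) -> list[str]:
    """Check structural guardrails; returns violations (empty list means valid)."""
    errors, seen = [], set()
    for step in steps:
        for dep in step.depends_on:
            if dep not in seen:
                errors.append(f"step {step.number} depends on step {dep}, which does not come earlier")
        if step.changes_state and step.reversal is None and not step.human_gate:
            errors.append(f"step {step.number} changes state but declares no reversal or human gate")
        seen.add(step.number)
    return errors

renewal_plan = [
    Step(1, "check_last_outreach", changes_state=False),
    Step(2, "create_follow_up_task", changes_state=True, depends_on=(1,),
         reversal="delete_task", skip_if="recent_outreach"),
    Step(3, "request_discount_approval", changes_state=True, depends_on=(2,), human_gate=True),
]
```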

3. Control (Orchestration ↔ System)

Orchestration consults its tool directory and binds each step based on capability matches:

“Check outreach” → CRMSearchTool (search capability)

“Create task” → TaskServiceTool (write capability, reversal support)

“Request discount” → ApprovalServiceTool (approve capability, human judgment)
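Capability-based binding can be sketched as a lookup over the tool directory. The `ToolSpec` fields (`side_effects`, `supports_undo`, `human_judgment`) are hypothetical, chosen so a read-only search tool never needs an undo path while a write step that requires reversal only binds to a tool that supports it.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ToolSpec:
    name: str
    capability: str       # capability family: "search", "write", or "approve"
    side_effects: bool    # does calling the tool change external state?
    supports_undo: bool   # can the tool reverse its own effect?
    human_judgment: bool = False

# Hypothetical tool directory entries mirroring the renewal scenario.
DIRECTORY = [
    ToolSpec("CRMSearchTool", "search", side_effects=False, supports_undo=False),
    ToolSpec("TaskServiceTool", "write", side_effects=True, supports_undo=True),
    ToolSpec("ApprovalServiceTool", "approve", side_effects=True, supports_undo=False,
             human_judgment=True),
]

def bind(capability: str, needs_reversal: bool, directory=DIRECTORY) -> ToolSpec:
    """Pick the first tool whose capability matches and that honors the step's reversal needs."""
    for tool in directory:
        reversal_ok = not needs_reversal or not tool.side_effects or tool.supports_undo
        if tool.capability == capability and reversal_ok:
            return tool
    raise LookupError(f"no tool offers {capability!r} with the required guarantees")
```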

The runner executes step-by-step. If a later step fails after step 2 succeeds, the compensation manager uses the captured task ID to reverse the change. When step 3 requires approval, a human sees the reasoning and reversal path before execution.
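The compensation behavior described here is the saga pattern. A minimal sketch, assuming each step is a `(name, action, compensation)` triple where the compensation receives the step's own result (which is how the captured task ID reaches the undo call):

```python
from typing import Callable, Optional

Action = Callable[[], dict]
Compensation = Optional[Callable[[dict], None]]

def run_saga(steps: list[tuple[str, Action, Compensation]]) -> dict:
    """Run steps in order; if one fails, undo completed steps in reverse order."""
    completed: list[tuple[str, dict, Compensation]] = []
    for name, action, compensate in steps:
        try:
            result = action()
        except Exception as exc:
            rolled_back = []
            for done_name, done_result, undo in reversed(completed):
                if undo is not None:
                    undo(done_result)  # e.g. delete the task using the captured task ID
                    rolled_back.append(done_name)
            return {"status": "rolled_back", "failed_step": name,
                    "error": str(exc), "compensated": rolled_back}
        completed.append((name, result, compensate))
    return {"status": "succeeded", "results": {n: r for n, r, _ in completed}}
```

This sketch assumes compensations themselves succeed; a production compensation manager would also need to retry or escalate a failed undo.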

4. Evidence and Learning (System → Data)

System adapters execute and emit outcomes: status, result, evidence, confidence, timestamps, correlation IDs. Orchestration uses these to continue, branch, retry, or roll back. Data ingests outcomes—raw events to the historian, curated summaries to long-term memory—so the next plan benefits from what actually worked.
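The split between raw events and curated lessons can be sketched as a small intake on the Data side. The `Outcome` fields follow the list above; the curation rule (only successful outcomes at or above a confidence threshold become lessons) and the 0.8 default are hypothetical.

```python
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class Outcome:
    correlation_id: str
    step: str
    status: str        # "succeeded" or "failed"
    evidence: str
    confidence: float  # 0.0 to 1.0 (assumed scale)

class LearningSink:
    """Data-layer intake: every outcome goes to the historian; only some become lessons."""

    def __init__(self, min_confidence: float = 0.8):
        self.historian: list[dict] = []
        self.long_term: dict[str, list[str]] = {}
        self.min_confidence = min_confidence  # hypothetical curation threshold

    def ingest(self, outcome: Outcome) -> None:
        self.historian.append(asdict(outcome))  # raw event, always kept for audit
        if outcome.status == "succeeded" and outcome.confidence >= self.min_confidence:
            lesson = f"{outcome.step} worked: {outcome.evidence}"
            self.long_term.setdefault(outcome.step, []).append(lesson)
```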

The point: Plans you can execute, audit, and reverse without guesswork.

Design Principles for the Base Architecture

Define explicit contracts. Name and type the four hand-offs. Version and log them.

Separate concerns strictly. Data doesn’t reason. Agent doesn’t execute. Orchestration doesn’t perceive. Each layer has one job, and each state type (working, process, short-term, long-term) has one purpose.

Limit autonomy upfront. Every plan declares limits: depth, time, cost, tool scope, and stop rules. Expansion requires evidence and explicit human intervention.

Make actions reversible. Every step that changes state must declare how to reverse it. Build rollback capability in before failures occur.

Standardize tool interfaces. Group capabilities into families (search/write/approve) with consistent contracts. Route all model calls through a single gateway. This makes tools discoverable and governance enforceable.

Build audit trails. Correlate context → plan → actions → outcomes end-to-end, and log each action. This makes the system explainable when things go wrong.
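End-to-end correlation can be sketched as a log keyed by correlation ID, where a run counts as explainable only if every hand-off was recorded. The stage names mirror the four contracts; the `AuditTrail` class and its completeness rule are hypothetical.

```python
STAGES = ("context", "plan", "action", "outcome")

class AuditTrail:
    """Logs every hand-off under a correlation ID so one run can be replayed end to end."""

    def __init__(self):
        self._records: dict[str, list[tuple[str, dict]]] = {}

    def log(self, correlation_id: str, stage: str, payload: dict) -> None:
        if stage not in STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self._records.setdefault(correlation_id, []).append((stage, payload))

    def trace(self, correlation_id: str) -> list[str]:
        """Return the stages recorded for one run, in logging order."""
        return [stage for stage, _ in self._records.get(correlation_id, [])]

    def is_complete(self, correlation_id: str) -> bool:
        """A run is explainable only if each contract stage appears at least once."""
        return set(STAGES) <= set(self.trace(correlation_id))
```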

In Closing

When AI acts, this Agentic AI architecture is at work—whether you see all four layers explicitly or they’re abstracted by frameworks. It solves the core challenge: letting AI act autonomously while keeping it auditable, reversible, and safe.

Data frames the moment with evidence and honest unknowns.

Agent plans with boundaries.

Orchestration binds intent to capability and keeps runs reversible.

System commits change and reports what happened.

Learning closes the loop.

This is the base pattern. Learn it once; extend it many times.
