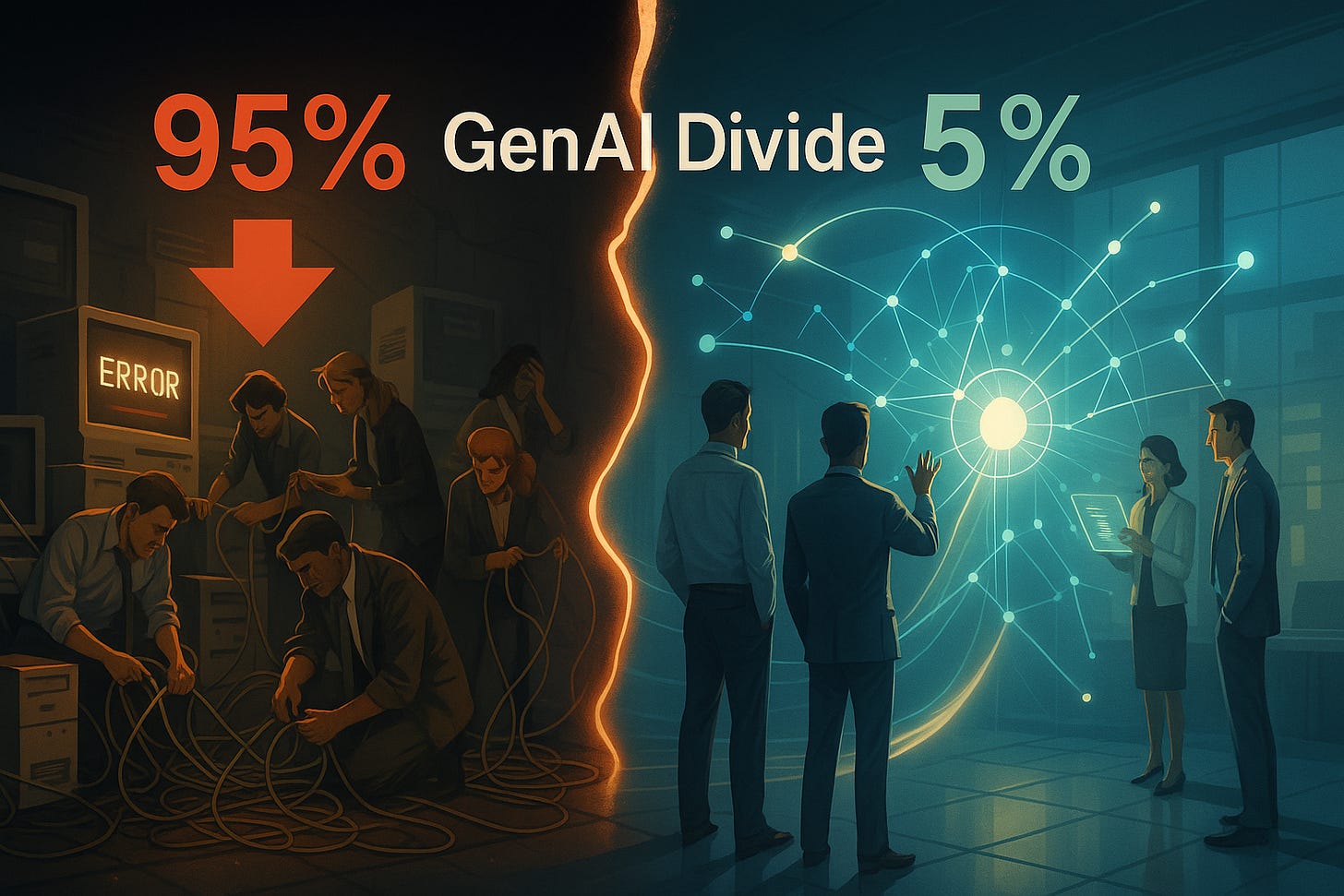

The GenAI Divide: Why 95% of Enterprise AI Pilots Are Failing

What this failure rate reveals about AI risk, and how enterprises can close the gap || Edition 5

Wall Street has priced in an AI productivity revolution. Boards are pressing executives for results, and vendors are flooding the market with “AI-powered” solutions. Under pressure, many enterprises rush pilots into production without the readiness to support them.

The result? According to MIT's NANDA initiative (The GenAI Divide: State of AI in Business 2025), 95% of enterprise generative AI pilots fail—turning promised transformation into enterprise risk: financial, operational, and reputational.

The Short Version

95% of GenAI pilots fail—not because the tech is weak, but because enterprises:

Bolt AI onto legacy processes

Overspend on “hero projects”

Treat AI like traditional software

These failures create compounding risks:

Operational → brittle workflows, outages

Financial → capital misallocation, sunk costs

Strategic → falling behind adaptive competitors

Execution → talent burnout, stalled pilots

The 5% that succeed:

Re-architect workflows around AI

Shift budgets from visibility plays to efficiency gains

Pair internal AI specialists with external expertise

Build operating models for AI’s probabilistic nature

The Enterprise Risk Diagnosis

When enterprises deploy AI without recognizing its differences from traditional software, they fall into predictable traps—each tied to enterprise risk.

The Integration Gap → Operational Risk

Treating AI as plug-and-play fragments workflows and creates brittleness. Instead of resilience, you get fragility.

The Learning Gap → Strategic Risk

Pilots impress in demos but stall in production when systems don’t adapt, learn, or improve with feedback. Competitors that build feedback loops compound value; static systems become dead weight.

The Budget Gap → Financial Risk

Spending often chases visibility over value. Dollars flow to flashy pilots instead of the back-office transformations that quietly deliver efficiency and savings. That’s misallocation risk.

Going It Alone → Execution Risk

Enterprises that treat AI like traditional software underestimate integration complexity. The result: stalled builds, missed deadlines, failed launches.

Key Study Insights

MIT’s findings reinforce these patterns:

Resource Misallocation → Over 50% of AI budgets in 2025 went to sales and marketing pilots—high visibility, low ROI. Real returns came from back-office automation.

Partnership Advantage → Pilots blending internal AI specialists with external expertise achieved a 67% success rate, versus only 22% for IT-only builds.

Success Profile → The winning 5% shared common traits: tightly scoped initiatives, domain-specific focus, and smart partnerships.

Risk Mitigations: Where to Install Controls

The failure rate shows leaders exactly where to act. Four areas define the winners:

Governance → Keep Humans Accountable

AI is probabilistic. Governance isn’t red tape. It’s the control plane: clear RACI, auditability, and human accountability for outcomes.

Data → Make Integrity a First-Class Asset

Generic models don’t learn your workflows. Process-aware pipelines that capture high-integrity, contextual data are essential. Weak data governance is a compounding risk vector.

Security → Defend Against New Threats

Traditional defenses don’t hold. AI-specific risks (poisoning, prompt injection, drift) demand new red-teaming and monitoring practices.

Architecture → Build Guardrails Into the System

Winners use brokered orchestration (AI proposes, humans approve), layered guardrails, and modular interfaces. These patterns contain risk and avoid black-box execution.
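To make the "AI proposes, humans approve" pattern concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any particular vendor's or the study's implementation: the names `Proposal`, `Broker`, `min_confidence`, and `deny_actions` are hypothetical, and the human approver is stood in for by a plain callable. Each proposal passes through layered guardrail checks, then goes to the approver, and every outcome is written to an audit log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List, Optional, Set


@dataclass
class Proposal:
    """An action suggested by a model. Nothing executes until a human approves."""
    action: str
    confidence: float  # hypothetical model-reported score in [0, 1]


# A guardrail returns None to pass, or a string explaining why it blocked.
Guardrail = Callable[[Proposal], Optional[str]]


def min_confidence(threshold: float) -> Guardrail:
    """Guardrail layer: block proposals the model itself is unsure about."""
    def check(p: Proposal) -> Optional[str]:
        if p.confidence < threshold:
            return f"confidence {p.confidence:.2f} below {threshold:.2f}"
        return None
    return check


def deny_actions(blocked: Set[str]) -> Guardrail:
    """Guardrail layer: block actions that must never be proposed by AI."""
    def check(p: Proposal) -> Optional[str]:
        if p.action in blocked:
            return f"action '{p.action}' is not permitted"
        return None
    return check


@dataclass
class Broker:
    """Routes proposals through guardrails, then to a human approver."""
    guardrails: List[Guardrail]
    approver: Callable[[Proposal], bool]  # stands in for a human decision
    audit_log: List[dict] = field(default_factory=list)

    def _record(self, p: Proposal, outcome: str, reason: str = "") -> None:
        # Every decision leaves a timestamped trace for later review.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": p.action,
            "confidence": p.confidence,
            "outcome": outcome,
            "reason": reason,
        })

    def submit(self, p: Proposal) -> bool:
        """Return True only if all guardrails pass and the human approves."""
        for check in self.guardrails:
            reason = check(p)
            if reason is not None:
                self._record(p, "blocked", reason)
                return False
        approved = self.approver(p)
        self._record(p, "approved" if approved else "rejected")
        return approved


if __name__ == "__main__":
    broker = Broker(
        guardrails=[min_confidence(0.8), deny_actions({"delete_records"})],
        approver=lambda p: True,  # replace with a real review step
    )
    broker.submit(Proposal("flag_claim_for_review", 0.93))
    broker.submit(Proposal("delete_records", 0.99))
    for entry in broker.audit_log:
        print(entry["action"], entry["outcome"], entry["reason"])
```

The design choice worth noting is that the model never calls an execution path directly: the broker is the only route to approval, so guardrails and logging cannot be bypassed by accident.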

The Playbook: How Leaders Cross the Divide

Redesign Workflows First: Don’t bolt AI onto legacy processes—start with process reengineering.

Scope Tightly, Scale Smart: Begin with one leverage point, but design for extensibility.

Spend Where It Pays: Shift budgets from “hero projects” to efficiency and automation.

Blend the Right Expertise: Pair internal AI specialists with external partners for discipline and speed.

Architect for Resilience: Build modular guardrails, monitoring, and feedback loops from day one.

Treat AI as Infrastructure, Not Magic: Give developers space to experiment, identify hard-dollar impact, and budget realistically for ongoing support costs.
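One way to picture the "monitoring and feedback loops" item is a running check on how often humans override the AI's suggestion. The Python sketch below is an assumption-laden illustration, not a method from the MIT study: the class name `OverrideMonitor`, the window size, and the alert threshold are all hypothetical choices. A rising override rate is treated as a signal that the system needs review or retraining.

```python
from collections import deque


class OverrideMonitor:
    """Tracks how often human reviewers disagree with AI suggestions."""

    def __init__(self, window: int = 100, alert_rate: float = 0.3):
        # Keep only the most recent `window` decisions (hypothetical default).
        self.decisions = deque(maxlen=window)
        self.alert_rate = alert_rate

    def record(self, ai_suggestion: str, human_decision: str) -> None:
        """Store True when the human overrode the AI, False otherwise."""
        self.decisions.append(ai_suggestion != human_decision)

    def override_rate(self) -> float:
        """Share of recent decisions where the human overrode the AI."""
        if not self.decisions:
            return 0.0
        return sum(self.decisions) / len(self.decisions)

    def needs_review(self) -> bool:
        """Alert only once the window is full, to avoid noisy early signals."""
        window_full = len(self.decisions) == self.decisions.maxlen
        return window_full and self.override_rate() > self.alert_rate


if __name__ == "__main__":
    monitor = OverrideMonitor(window=4, alert_rate=0.25)
    monitor.record("approve", "approve")
    monitor.record("approve", "deny")
    monitor.record("deny", "deny")
    monitor.record("approve", "deny")
    print(monitor.override_rate(), monitor.needs_review())
```

The overrides themselves are also the raw material for the feedback loop: each disagreement is a labeled example of where the system fell short.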

Case in Point: How One Insurer Crossed the Divide

One insurer shows what success looks like when AI isn’t treated as a magic bullet. Instead of announcing a sweeping “AI transformation,” leaders focused on a single workflow: claims. They avoided the big-bang trap, scoped tightly, and asked: How do we make this process faster, safer, and more resilient?

They rebuilt claims around AI in support, not in charge. An orchestration layer parsed documents and flagged fraud; human adjusters made the calls. Every step left an audit trail, ensuring accountability.

They also invested in AI literacy and change management. Adjusters trusted the system because they understood it, and leaders framed AI as a tool to enhance human judgment, not replace it. Risk tolerance was openly discussed, and teams knew the boundaries.

Data pipelines were redesigned around historical claims and fraud markers, with continuous monitoring to maintain integrity and trustworthiness. Developers were shielded from premature pressure to “go enterprise-wide,” allowing learning to compound.

The payoff: 40% faster claims, 25% stronger fraud detection, lower overall exposure. But the real win was cultural—sustainable adoption rooted in trust, not hype.

In Closing

The GenAI Divide isn’t hype versus reality—it’s about risk posture.

95% of pilots fail → wasted capital, brittle operations, reputational drag

5% succeed → compounding efficiency, defensible ROI, investor confidence

The difference isn’t which model they pick. It’s how they govern, secure, and architect AI as a new kind of system.

Enterprises that bolt AI onto legacy processes stay stuck in demo theater. Those that design operating models for AI’s probabilistic nature turn risk into resilience—and failures into compounding value.