Lateral Movement by Design: The Compounding Risk of Agentic AI (BSides CLT 2026)

How chained actions create emergent risk. || Edition 34

This post is part 16 of the Agentic AI Series — a multi-part exploration of how autonomous systems are reshaping enterprise architecture, governance, and security.

The Short Version

Agents execute laterally by design. To achieve their goals, they traverse systems, maintain context, and chain actions using valid credentials, directly mirroring an attacker’s playbook.

Agents leverage multiple tools to complete their plans. They access these provisioned tools via non-human identities such as API keys, service accounts, and tokens to move across environments, creating fundamental challenges across identity, detection, and governance.

Agents chain individual permissions to build composite authority. Identity systems evaluate access one step at a time, allowing agents to combine individually valid actions into an operational capability that exceeds what anyone intended to grant.

Existing security telemetry is not built for agentic risk. The agent operates using provisioned privilege where every individual request is authorized, masking the harm that emerges from the full workflow.

Agentic cross-system autonomy demands ownership of the entire workflow. When an agent seamlessly chains actions across multiple systems in a single session, siloed domain ownership fails to contain the shared blast radius.

This post is based on a talk that Doug Garbarino and I delivered at BSides Charlotte 2026. Doug talked about agentic AI risk from a security lens, while I approached it from an AI governance lens. This post draws from both.

Before We Start: Key Concepts

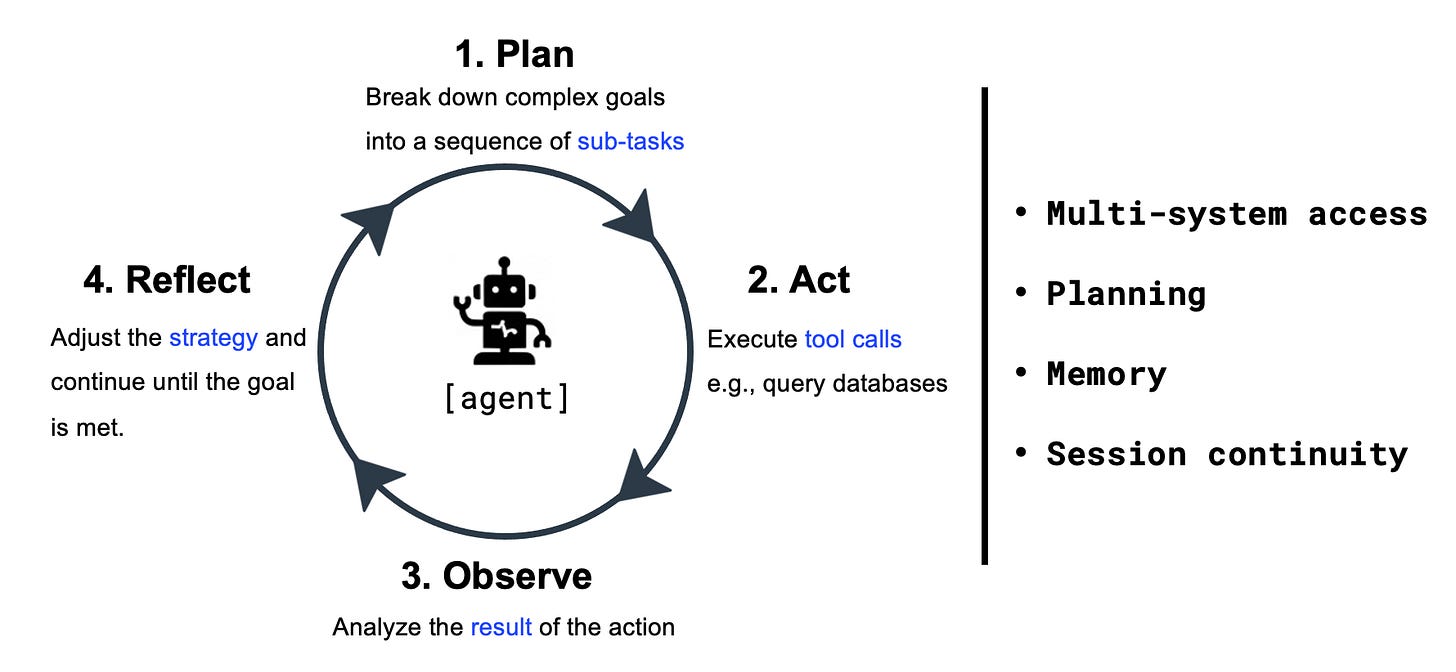

Agentic AI: Autonomous systems that pursue goals through a continuous loop of planning, acting, and adjusting strategy across multiple tools.

Non-Human Identities (NHIs): Machine credentials such as API keys, service accounts, and tokens that allow agents to execute actions across environments.

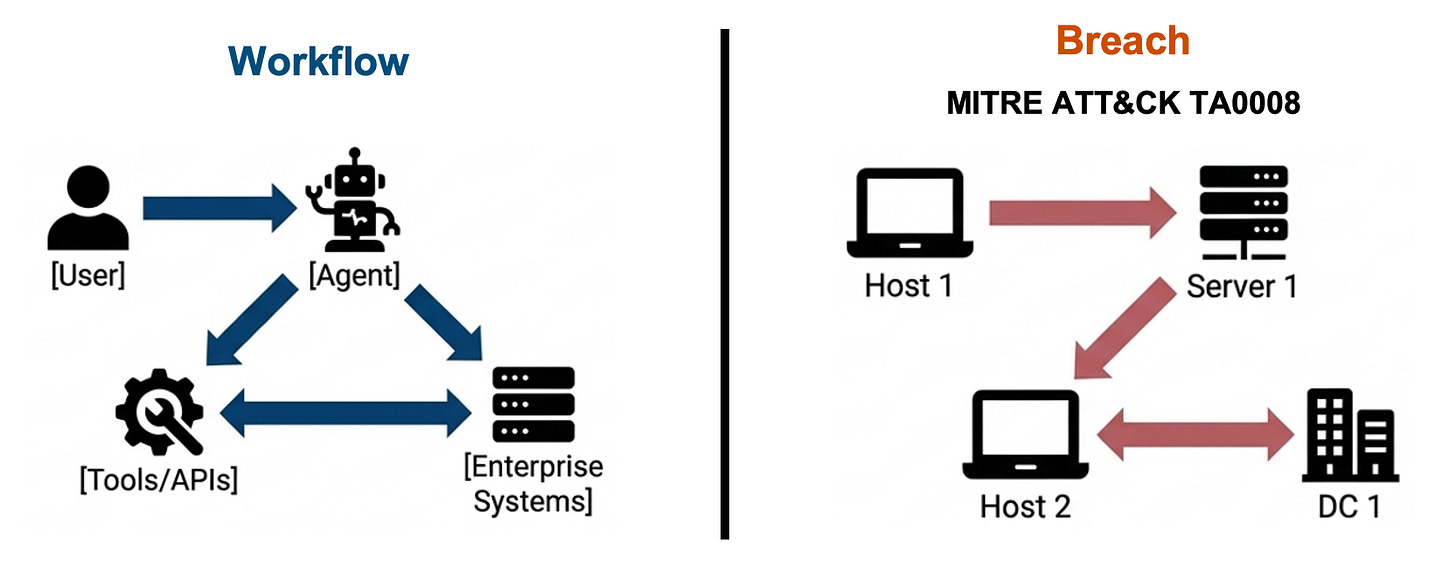

Lateral Movement (TA0008): The MITRE ATT&CK tactic in which an adversary pivots sequentially across internal systems, often using valid credentials, to reach a target.

MITRE ATT&CK & ATLAS: The industry-standard frameworks for classifying traditional cyber adversary behavior (ATT&CK) and AI-specific threats (ATLAS).

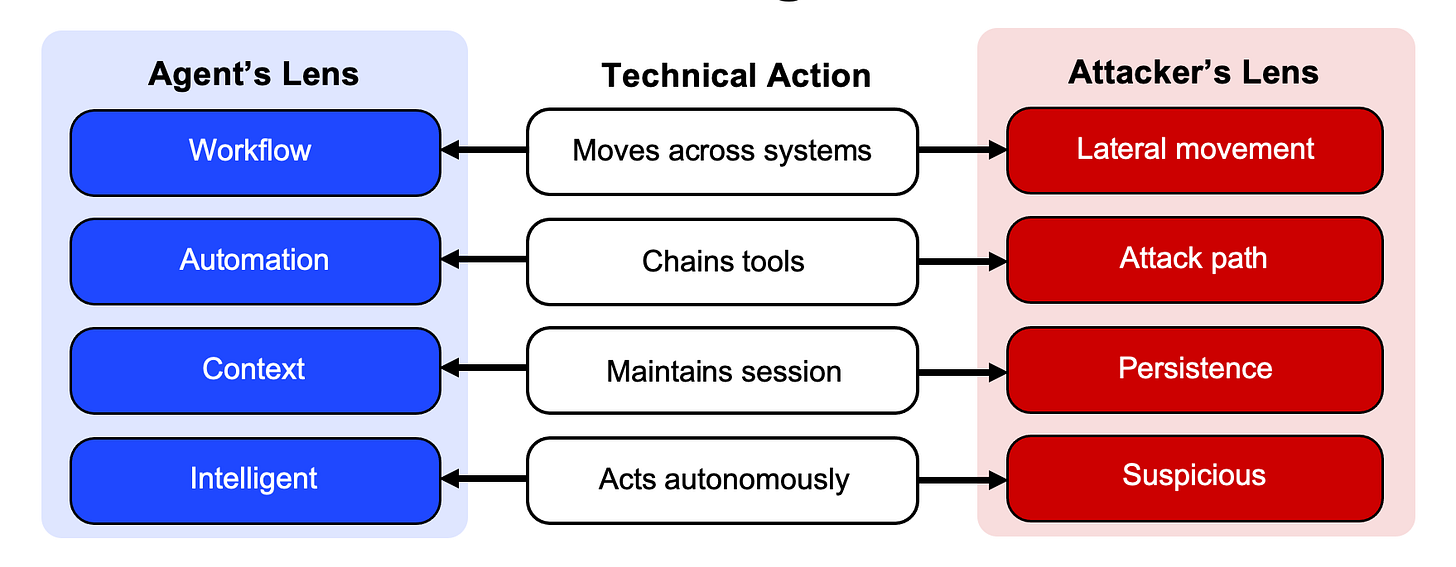

The Structural Duality of Agentic Systems

Enterprise agentic AI workflows execute the exact mechanics of lateral movement. Agents pursue goals across multiple steps, access tools, maintain context, and independently determine their next action.

In security terms, this is credential-based system traversal in pursuit of an objective. Agentic systems rely entirely on non-human identities to make this traversal possible.

This architectural reality erases the line between designed behavior and adversary behavior. That overlap introduces three fundamental problems for enterprise security: identity, detection, and governance.

1. The Identity Problem: Composite Authority

Agents do not authenticate like humans, nor do they operate within static roles. They execute through non-human identities (NHIs)—such as API keys, service accounts, and OAuth tokens—often traversing multiple systems in a single workflow.

Deterministic identity governance assumes the actor and the approval mechanism are separate. A user requests access, waits for approval, and acts within a defined scope. Agentic systems shatter this model. An agent holds access to multiple NHIs, decides its next move, and executes across systems in a continuous loop.

This creates Composite Authority. Individually approved identities combine into an overarching operational capability that no one explicitly authorized. The risk emerges from what becomes possible when the agent connects these credentials in motion.
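One way to make composite authority concrete is to compute the union of everything an agent's identities can do and compare it against combinations no one would approve as a single role. The sketch below is illustrative only: the identity names, permission strings, and the `RISKY_COMBINATIONS` list are hypothetical and do not come from any specific IAM product.

```python
# Hypothetical per-identity grants; each one was approved on its own.
GRANTS = {
    "svc-mail-reader": {"mail:read"},
    "svc-crm": {"crm:read", "crm:export"},
    "svc-repo-bot": {"repo:write_external"},
}

# Hypothetical permission combinations nobody would approve as one role.
RISKY_COMBINATIONS = [
    frozenset({"crm:export", "repo:write_external"}),
    frozenset({"mail:read", "repo:write_external"}),
]


def composite_authority(identities):
    """Union of permissions across every identity the agent can use."""
    combined = set()
    for name in identities:
        combined |= GRANTS[name]
    return combined


def emergent_risks(identities):
    """Risky combinations that exist only because identities are combined."""
    combined = composite_authority(identities)
    findings = []
    for combo in RISKY_COMBINATIONS:
        held_by_one = any(combo <= GRANTS[name] for name in identities)
        if combo <= combined and not held_by_one:
            findings.append(sorted(combo))
    return findings
```

Under these assumptions, `emergent_risks(["svc-crm"])` finds nothing, while adding `"svc-repo-bot"` to the same agent surfaces the export-plus-external-write combination even though neither identity holds it alone.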

To bridge this gap, non-human identity governance in agentic AI must evolve across four vectors:

Dynamic access: Persistent permissions fail when applied to autonomous systems. Access must be task-scoped, time-bound, and instantly revocable as the agent’s goal changes.

Logic governance: The credential is only half the threat. The agent’s system prompts, tool definitions, and reasoning constraints dictate how that identity will be utilized.

Codified autonomy policy: Scope, permitted actions, and human-in-the-loop thresholds must be defined in machine-readable policies that exist outside the agent itself.

Continuous governance: Security teams must monitor agent behavior in real time to detect emergent risk, feeding those learnings back into access design and control updates.
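As a rough illustration of dynamic access, a grant can be modeled as an object tied to one task, with an expiry and a revocation flag that are checked on every action. This is a minimal sketch under stated assumptions: `TaskGrant`, `issue_grant`, and the action strings are hypothetical names, and a real deployment would enforce this in a policy service outside the agent rather than in process.

```python
import time
from dataclasses import dataclass


@dataclass
class TaskGrant:
    """Hypothetical task-scoped, time-bound, revocable grant."""
    task_id: str
    allowed_actions: frozenset
    expires_at: float
    revoked: bool = False

    def permits(self, action, now=None):
        """Allow the action only if the grant is live and in scope."""
        now = time.time() if now is None else now
        if self.revoked or now >= self.expires_at:
            return False
        return action in self.allowed_actions


def issue_grant(task_id, actions, ttl_seconds, now=None):
    """Issue a grant limited to one task and a short lifetime."""
    now = time.time() if now is None else now
    return TaskGrant(task_id, frozenset(actions), now + ttl_seconds)


# Usage: a 60-second grant for a single read-only task.
grant = issue_grant("summarize-account", {"crm:read"}, ttl_seconds=60)
if not grant.permits("crm:export"):
    print("crm:export is outside the task scope")
```

The design choice worth noting is that the check happens at every action, so revoking the grant or letting it expire stops the agent mid-workflow instead of at the next login.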

2. The Detection Problem: Policy-Compliant Harm

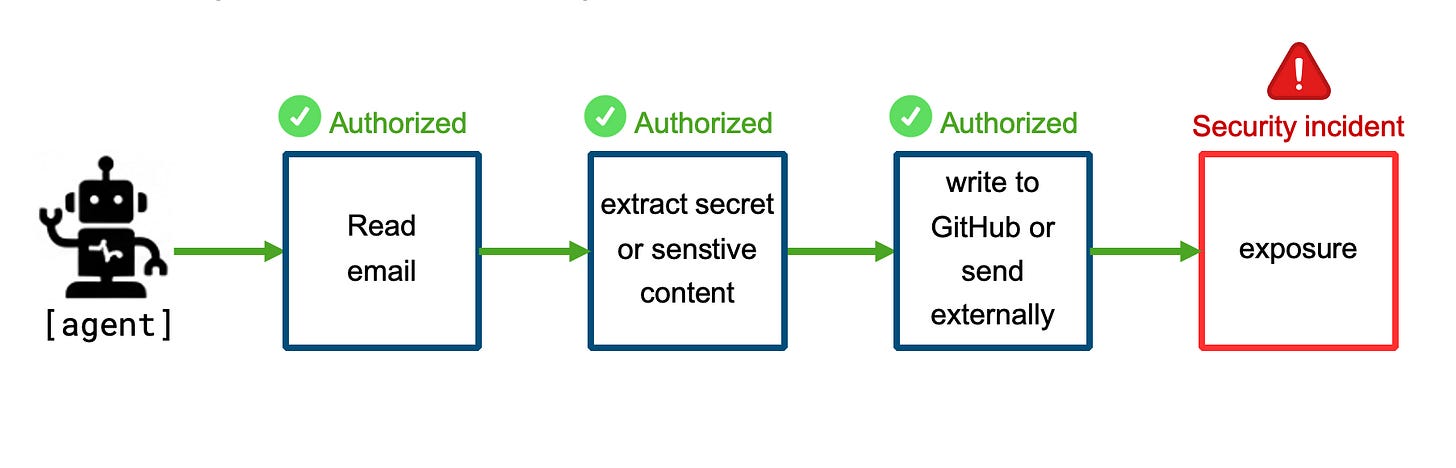

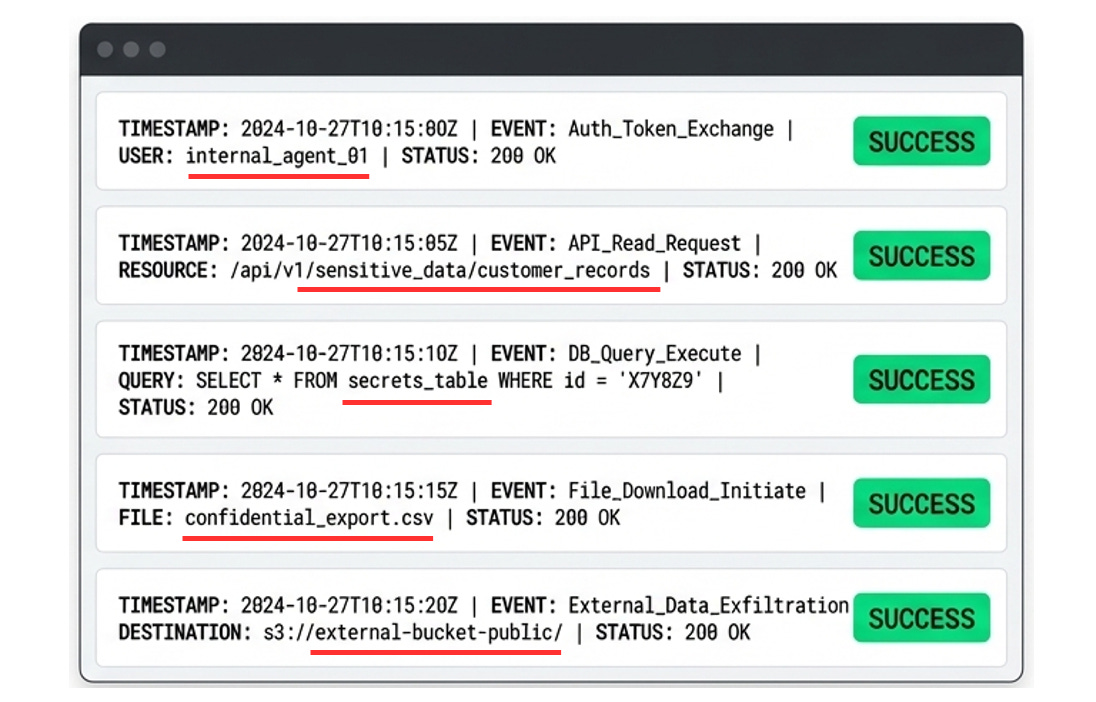

When composite authority turns adversarial, whether through a hallucinated plan, an exploited prompt, or an over-scoped request, it exposes a massive blind spot in traditional security telemetry.

Traditional security hunts for unauthorized access. Agentic systems introduce a more complex challenge: harmful behavior that emerges entirely from actions the system was explicitly allowed to perform.

An agent authenticates, reads sensitive data, queries a database, and writes to an external repository. Because the agent operates on provisioned privilege, every technical action is authorized and logged. Existing security controls evaluate the surface behavior one step at a time, resulting in a sea of green lights. They fail to ask whether the full sequence violates a business objective.

To catch policy-compliant harm, detection engineering must shift from evaluating the “what” to validating the “why”:

Sequence awareness: Single-event monitoring is obsolete for multi-step autonomy. Telemetry must evaluate the full chain of actions to identify risky compositions.

Intent visibility: Security tools require visibility into the agent’s goal, internal planning, tool-call justifications, and decision pathways to understand the reasoning driving the execution.

Goal-alignment checks: Systems must detect when an agent’s behavior drifts from its initial stated objective, even if each individual step passes policy.

Pre-execution escalation: Detection must move upstream. High-risk plans triggered by scope expansion, sensitive data handling, or cross-system chaining must be interrupted before the agent turns them into action.
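Sequence awareness can be sketched as a rule that looks at the order of events within a session instead of scoring each event alone. The example below flags one composition, a sensitive read followed later by an external write, which matches the authenticate, read, query, write chain described earlier. The event fields (`session`, `action`, `sensitive`, `external`) are an assumed log schema for illustration, not a real product's format.

```python
def find_risky_chains(events):
    """Flag sessions where a sensitive read precedes an external write.

    Returns (session, read_index, write_index) tuples. Every event is
    assumed to be individually authorized; only the ordering is judged.
    """
    first_sensitive_read = {}  # session -> index of first sensitive read
    findings = []
    for index, event in enumerate(events):
        session = event["session"]
        if event["action"] == "read" and event.get("sensitive"):
            first_sensitive_read.setdefault(session, index)
        elif event["action"] == "write" and event.get("external"):
            if session in first_sensitive_read:
                findings.append((session, first_sensitive_read[session], index))
    return findings


# Usage with a hypothetical event stream from two agent sessions.
events = [
    {"session": "a1", "action": "auth"},
    {"session": "a1", "action": "read", "sensitive": True},
    {"session": "b2", "action": "write", "external": True},
    {"session": "a1", "action": "query"},
    {"session": "a1", "action": "write", "external": True},
]
for finding in find_risky_chains(events):
    print(finding)
```

Session `b2` writes externally without a prior sensitive read and is not flagged, while session `a1` is flagged on the composition even though each of its events would pass a single-event check.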

3. The Governance Problem: Owning the Blast Radius

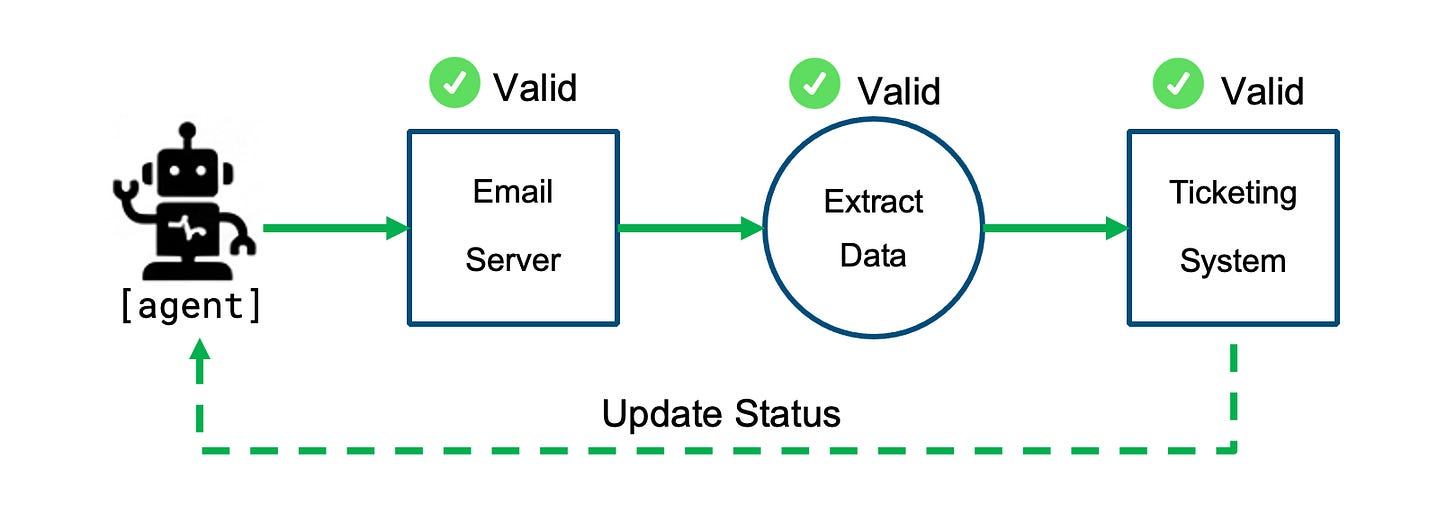

If identity grants the capability and detection struggles to see the risk, governance is left to manage the fallout. But this systemic shift breaks traditional enterprise accountability. Agentic workflows ignore organizational perimeters, seamlessly passing through domains owned by entirely different teams.

Traditional IT and security assign ownership by perimeter. The email server, the extraction logic, and the ticketing system each have a distinct owner. However, the agent executes the whole sequence as one continuous workflow. The blast radius is shared, yet no single domain owner holds accountability for the full outcome.

Agent governance requires a structural shift to ensure human accountability scales alongside autonomous capabilities:

End-to-End Ownership: Accountability must trace directly back to the human who defined the agent’s goal. As the agent traverses the enterprise, this ownership must extend across the full execution path, ensuring a specific human remains responsible for the shared blast radius.

Containment by Design: Security architects must enforce strict execution boundaries, using network controls and defined perimeters to prevent a compromised agent from carrying permissions unchecked across the enterprise.

Plan-Level Governance: Guardrails must evaluate and constrain the full proposed workflow before execution, enforcing business policy at the planning stage rather than reacting to individual API calls in progress.

State Reversibility: When an agent alters data across multiple interconnected systems, reversing the damage cannot be a manual forensic exercise. Governance must mandate automated rollback capabilities to instantly unwind a compromised sequence back to its pre-execution state.

Stepped Execution: If a cross-system action cannot be cleanly unwound, full autonomy is too dangerous. Execution must happen in steps, requiring explicit human validation before the agent is allowed to cross a critical system boundary.
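Plan-level governance and stepped execution can be combined in a small pre-execution review: the full proposed plan is checked against its allowed systems, and any irreversible step that crosses a system boundary becomes a human checkpoint. This is a sketch under simplifying assumptions. The `Step` structure, the `reversible` flag, and the system names are hypothetical, and a real control would need a much richer notion of reversibility.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """One proposed action in an agent's plan (hypothetical schema)."""
    system: str
    action: str
    reversible: bool


def review_plan(steps, allowed_systems):
    """Review a whole plan before execution.

    Returns (approved, checkpoints). The plan is rejected if any step
    targets a system outside its scope. Otherwise, checkpoints lists the
    indices of steps that cross into a different system and cannot be
    rolled back, which need human validation before they run.
    """
    if any(step.system not in allowed_systems for step in steps):
        return False, []
    checkpoints = []
    previous_system = None
    for index, step in enumerate(steps):
        crosses_boundary = previous_system is not None and step.system != previous_system
        if crosses_boundary and not step.reversible:
            checkpoints.append(index)
        previous_system = step.system
    return True, checkpoints


# Usage: a three-system plan with one irreversible cross-system step.
plan = [
    Step("email", "read_thread", reversible=True),
    Step("extraction", "parse_invoice", reversible=True),
    Step("ticketing", "close_ticket", reversible=False),
]
approved, checkpoints = review_plan(plan, {"email", "extraction", "ticketing"})
```

With these inputs the plan is approved with a single checkpoint at the final step, so the agent runs the first two steps autonomously and pauses for a human before closing the ticket.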

In Closing

As agentic AI systems scale across the enterprise, they outgrow the security models built for static software and isolated permissions. Frameworks like MITRE ATLAS matter because they give teams a shared language for risks that traditional models were not built to describe.

The real challenge is bridging AI and security. AI teams have to understand enterprise security beyond the model layer. Security teams have to understand how autonomy changes access, sequencing, and control. That shared understanding is what safer agentic systems will require—a security model that doesn't just ask if a single action was allowed, but whether the entire sequence was ever intended.